CYBER! Please check all boxes before you get pwned

The talk analyzes systemic failures in IT security, specifically focusing on the insecure implementation of Germany's national electronic health record system (ePA) and the prevalence of insecure second-factor authentication mechanisms. It demonstrates how predictable identifiers and poor API design allow for unauthorized access to sensitive data, and how ransomware groups exploit basic security hygiene. The speaker argues that current compliance-driven security models fail to address real-world threats and advocates for risk-based, resilient security practices.

The Predictable Identifier Flaw Behind Germany’s National Health Record Breach

TLDR: Germany’s national electronic health record system (ePA) suffered a critical failure because it relied on predictable, incremental identifiers to access patient data. By simply iterating through card numbers, an attacker could retrieve insurance information and subsequently access sensitive health records without any secondary authentication. This research highlights the danger of relying on "security by obscurity" and demonstrates why compliance-driven design often ignores basic, exploitable flaws.

Security researchers often talk about "broken access control" as a theoretical risk, but rarely do we see a national-scale infrastructure project fail so spectacularly at the most basic level. The German electronic health record system, or ePA, was designed to connect over 70 million patients with 110,000 medical practices. The architects behind this system, a public-private partnership called gematik, decided that the best way to secure this massive data repository was to mandate the purchase of proprietary "connectors" (essentially glorified firewalls) and then rely on a complex, paper-heavy compliance framework.

The reality, as demonstrated by the Chaos Computer Club, is that the system’s security was effectively non-existent. The core vulnerability was not a sophisticated zero-day or a complex cryptographic bypass. It was a simple, predictable identifier scheme.

The Mechanics of the Identifier Flaw

The ePA system requires two pieces of information to grant a doctor access to a patient’s health record: the card number (printed on the back of the health card) and the insurance number (printed on the front). In a secure system, these would be treated as secrets, or at least protected by a robust identification and authentication mechanism.

In the ePA implementation, the API was designed to return the insurance number if you provided a valid card number. Because these card numbers were assigned incrementally, an attacker could simply write a basic for-loop to iterate through the sequence. Once the attacker had the insurance number, they could query the API to pull the associated health records.

The following pseudocode illustrates just how trivial this exploitation path was for an attacker:

    # Simple iteration to harvest insurance numbers
    for i in range(1000000):
        card_number = base_card_number + i
        insurance_number = api.get_insurance_number(card_number)
        if insurance_number:
            health_record = api.dump_health_records(card_number, insurance_number)
            save_to_disk(health_record)

This is a classic example of Broken Access Control. The system assumed that because the card number was physically printed on a card, it was "secure." It failed to account for the fact that in a digital environment, physical possession is not a prerequisite for access if the identifier is predictable and the API is exposed.
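For contrast, here is a minimal sketch of the two properties the flawed design lacked: identifiers that cannot be guessed by counting, and an authorization check on every read. It is not the ePA's actual design or its fix. The in-memory stores and the names `create_record`, `grant_access`, `read_record`, and `practice_id` are hypothetical, and the caller is assumed to have been authenticated already by some other layer.

```python
import secrets

# Hypothetical in-memory stores for illustration only. A real system would
# use a database and a proper identity provider.
RECORDS = {}     # opaque record token -> health record
GRANTS = set()   # (practice_id, record token) pairs the patient approved


def create_record(data: str) -> str:
    """Store a record under a random, non-sequential token."""
    token = secrets.token_urlsafe(32)  # 32 random bytes, not guessable by counting
    RECORDS[token] = data
    return token


def grant_access(practice_id: str, token: str) -> None:
    """Record that the patient authorized one practice for one record."""
    GRANTS.add((practice_id, token))


def read_record(practice_id: str, token: str) -> str:
    """Return a record only if this (already authenticated) caller holds a grant."""
    # Authorization is checked on every request. Knowing the token alone is
    # not enough, and unknown tokens fail the same way as unauthorized ones.
    if (practice_id, token) not in GRANTS:
        raise PermissionError("caller is not authorized for this record")
    return RECORDS[token]
```

The random token only removes enumeration as an attack path. The grant check is what actually enforces access control, which is why the two are kept separate here.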

Why Compliance Fails Real-World Security

The most frustrating aspect of this research is the disconnect between the "security" mandated by the government and the actual security of the implementation. The system was audited by the Fraunhofer Institute, which produced a report confirming the system was "secure." This report was based on a mountain of policy documents and compliance checklists.

When the researchers pointed out that the system could be breached with a few lines of Python, the response from the vendors was not to fix the underlying architecture. Instead, they added more policies. They mandated that risk assessments for new vulnerabilities must be performed within 72 hours, even on weekends. They essentially tried to patch a broken design with more bureaucracy.

This is a recurring theme in our industry. We see organizations spend millions on compliance certifications while ignoring the fact that their APIs are leaking data because they used an auto-incrementing integer as a primary key. As a pentester, you will encounter this constantly. When you are on an engagement, stop looking at the compliance documentation and start looking at the API endpoints. If you see an ID that looks like 1001, 1002, 1003, and the endpoint does not verify that the caller is entitled to that record, you have already found your entry point.
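During an authorized engagement, a quick heuristic can flag identifiers that deserve a closer look. The sketch below is an illustration, not a tool from the talk. The function name `looks_sequential` and its thresholds are assumptions, and a positive result only means the IDs are enumerable, not that the endpoint is missing an authorization check.

```python
def looks_sequential(ids: list[int], max_step: int = 1, min_ratio: float = 0.9) -> bool:
    """Heuristic: do most neighbouring observed IDs differ by a small step?"""
    ordered = sorted(set(ids))
    if len(ordered) < 3:
        return False  # too few distinct samples to say anything
    # Differences between neighbouring IDs once sorted.
    gaps = [b - a for a, b in zip(ordered, ordered[1:])]
    small = sum(1 for gap in gaps if gap <= max_step)
    return small / len(gaps) >= min_ratio


# IDs collected from responses the tester is allowed to see.
print(looks_sequential([1001, 1002, 1003, 1004]))   # True
print(looks_sequential([1001, 58213, 990417, 7]))   # False
```

If the check comes back positive, the next step is to request a neighbouring ID with the same credentials and see whether the server refuses.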

Ransomware and the Myth of "AI-Ready" Security

The same lack of technical rigor applies to how organizations handle ransomware. We often see companies that have been hit by ransomware gangs like Black Basta express shock at the simplicity of the attack. They assume the attackers are nation-state actors with advanced toolsets.

In reality, these groups are often just using Metasploit or similar frameworks to exploit basic hygiene issues. They gain access, dump credentials, and move laterally. The "cryptographic implementation" they use is often flawed, relying on intermittent encryption to save time and resources. This is why, in some cases, it is possible to recover data without paying the ransom.
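To see why intermittent encryption can leave data recoverable, it helps to work out how much of a file such a scheme never touches. The sketch below assumes a simple pattern (encrypt `chunk` bytes, skip `skip` bytes, repeat from the start of the file). The pattern and the sizes are illustrative assumptions, not the parameters of Black Basta or any other real ransomware family.

```python
def untouched_ranges(file_size: int, chunk: int, skip: int) -> list[tuple[int, int]]:
    """Byte ranges [start, end) left in plaintext under the assumed pattern."""
    if chunk <= 0 or skip < 0:
        raise ValueError("chunk must be positive and skip non-negative")
    ranges = []
    offset = chunk  # the first `chunk` bytes are encrypted
    while offset < file_size:
        end = min(offset + skip, file_size)
        if end > offset:
            ranges.append((offset, end))
        offset = end + chunk  # jump over the next encrypted chunk
    return ranges


def plaintext_fraction(file_size: int, chunk: int, skip: int) -> float:
    """Share of the file that the assumed pattern never encrypts."""
    if file_size <= 0:
        return 0.0
    kept = sum(end - start for start, end in untouched_ranges(file_size, chunk, skip))
    return kept / file_size


# A 1000-byte file, 64 bytes encrypted, then 192 bytes skipped, repeated.
print(untouched_ranges(1000, 64, 192))
# [(64, 256), (320, 512), (576, 768), (832, 1000)]
```

Under these assumed numbers, roughly three quarters of the file stays in plaintext. Whether that is enough to rebuild a usable file depends on the file format and on which parts were hit.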

The industry’s obsession with "AI-ready" security or "active defense" is a distraction. When a CISO tells you they are implementing an AI-driven solution to detect threats, they are usually just trying to pass an audit. They are more afraid of the regulator than the attacker. If you fail an audit, you lose your job. If you get breached, you just hire a PR firm and a forensic team, and you keep your job.

What to Do Next

If you are a researcher or a pentester, your job is to expose these gaps. Do not let the "compliance" label intimidate you. If a system claims to be secure, test the identifiers. Test the API authorization. Test the assumptions.

We need to stop blaming users for clicking on links in phishing emails and start building systems that don't break when a user makes a mistake. If your infrastructure is so fragile that a single click on a malicious link leads to a full domain compromise, the problem is not the user. The problem is the architecture. Stop building systems that require perfect human behavior to remain secure. Start building systems that assume the user will be the weakest link and design accordingly.

Vulnerability Classes

Tools Used

Target Technologies

Attack Techniques

OWASP Categories

All Tags

Up Next From This Conference

From Skip-Kid to Cyber Kingpin: Preventing the Predictable Progression

Inside the Ransomware Machine

Who Gets to Point Fingers? Technical Capacity and International Accountability

Similar Talks

Surveilling the Masses with Wi-Fi Positioning Systems

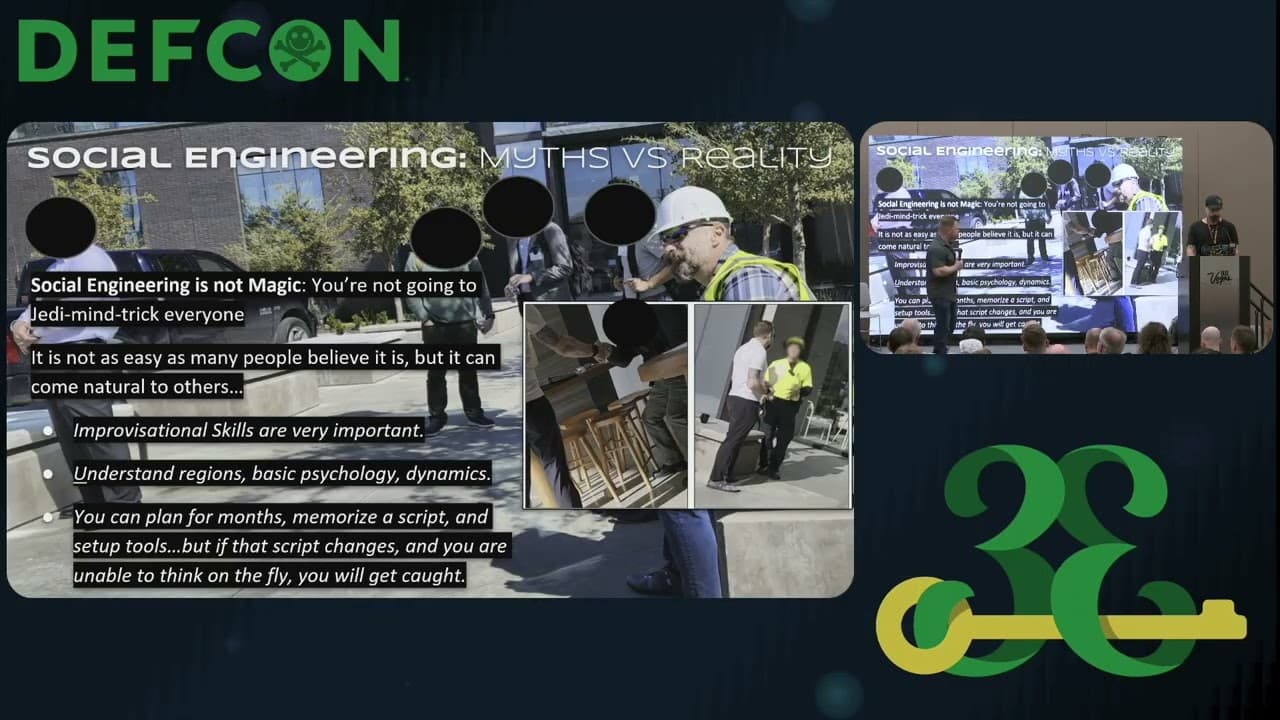

How Not to Do a Physical Security Penetration Test