How to Train Your Detection Dragon

This talk outlines a practical framework for building and scaling a security detection and response pipeline within a resource-constrained environment. It emphasizes the importance of log source prioritization, alert contextualization, and the use of standardized detection formats like Sigma to reduce noise. The speaker demonstrates how to integrate response playbooks directly into existing communication channels like Slack to streamline incident triage and reduce manual overhead.

Scaling Security Operations Without Breaking Your Team

TLDR: Building a detection and response pipeline often leads to alert fatigue and broken processes. By prioritizing log sources based on compliance and business impact, and integrating response workflows directly into communication tools like Slack, teams can effectively manage security events without needing massive budgets. This approach focuses on contextualizing alerts and empowering cross-functional teams to own their security responsibilities.

Security teams are drowning in noise. Every new tool added to the stack brings a fresh stream of logs, and every new log source brings a fresh set of alerts that nobody has the time to triage. When you are the first security hire or part of a small, lean team, you cannot afford to chase every phantom signal. You need a way to separate signal from noise that actually scales.

Prioritizing the Ingestion Pipeline

Most teams make the mistake of trying to ingest everything at once. This is a losing battle. Instead, prioritize your log sources based on what keeps the business running and what keeps you compliant. Start with systems that have direct compliance requirements, such as those in scope for PCI DSS or SOC 2 audits. These are your non-negotiables.

Once those are covered, move to business-critical systems. If an application is the lifeblood of your company, it needs visibility. Only after you have secured these tiers should you look at the rest of your infrastructure. If you are not sure where to start, look at the MITRE ATT&CK framework to map your current visibility against known adversary techniques. This helps you identify where your biggest blind spots are, rather than just ingesting logs for the sake of having data.
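
The tiering described above can be sketched in a few lines of Python. This is a minimal illustration, not the speaker's implementation: the `LogSource` fields, the source names, and the coverage map are all hypothetical placeholders you would replace with your own inventory.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogSource:
    name: str
    compliance_scope: bool   # e.g. in scope for PCI DSS or SOC 2 (assumed flag)
    business_critical: bool  # assumed flag set by the system owner


def tier(source: LogSource) -> int:
    """Return 1 for compliance-scoped, 2 for business-critical, 3 for the rest."""
    if source.compliance_scope:
        return 1
    if source.business_critical:
        return 2
    return 3


def onboarding_order(sources):
    # sorted() is stable, so sources within a tier keep their input order.
    return sorted(sources, key=tier)


def blind_spots(priority_techniques, covered_by):
    """ATT&CK technique IDs that no onboarded log source gives visibility into."""
    return sorted(t for t in priority_techniques if not covered_by.get(t))


# Hypothetical inventory, for illustration only.
SOURCES = [
    LogSource("dev-wiki", False, False),
    LogSource("payments-api", True, True),
    LogSource("orders-db", False, True),
    LogSource("cardholder-vault", True, False),
]
ORDER = [s.name for s in onboarding_order(SOURCES)]

# Hypothetical coverage map: technique ID -> log sources that can surface it.
COVERAGE = {"T1078": ["payments-api"], "T1548": []}
GAPS = blind_spots(["T1078", "T1548"], COVERAGE)
```

Here `ORDER` puts the two compliance-scoped sources first, then `orders-db`, then `dev-wiki`, and `GAPS` names the technique that still has no visibility.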

Contextualizing Alerts to Kill False Positives

Alerts without context are just notifications. If you receive an alert for a potential T1078 Valid Accounts event, you need to know immediately if that activity is normal for the user or the service account involved.

The most effective way to handle this is through log enrichment. When an alert triggers, it should already contain the metadata necessary for a decision. Is this user a developer? Are they accessing this resource from their usual IP range? By adding these details at the time of ingestion, you allow your team to make a triage decision in seconds rather than minutes.
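
As a rough sketch of what enrichment could look like, the snippet below attaches a role and a "usual network" flag to an event before it reaches an analyst. The `USER_DIRECTORY` lookup, the field names, and the sample values are assumptions made for illustration; in practice this data might come from an identity provider or asset inventory.

```python
import ipaddress

# Hypothetical directory data; replace with a real identity or asset source.
USER_DIRECTORY = {
    "alice": {"role": "developer", "usual_networks": ["10.20.0.0/16"]},
    "svc-backup": {"role": "service-account", "usual_networks": ["10.30.5.0/24"]},
}


def enrich(event: dict) -> dict:
    """Return a copy of the event with role and network context attached."""
    enriched = dict(event)
    profile = USER_DIRECTORY.get(event["user"])
    if profile is None:
        # Unknown identities get no benefit of the doubt.
        enriched["user_role"] = "unknown"
        enriched["from_usual_network"] = False
        return enriched

    source_ip = ipaddress.ip_address(event["source_ip"])
    enriched["user_role"] = profile["role"]
    enriched["from_usual_network"] = any(
        source_ip in ipaddress.ip_network(net)
        for net in profile["usual_networks"]
    )
    return enriched


EXPECTED = enrich({"user": "alice", "source_ip": "10.20.4.7"})
UNUSUAL = enrich({"user": "alice", "source_ip": "203.0.113.9"})
```

With these two fields on the alert, a login by a developer from an unfamiliar address stands out immediately, while the same login from the usual range can be closed quickly.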

Tools like Sigma are essential here. Sigma provides a vendor-agnostic format for detection rules, which converters then translate into the query language of whichever SIEM you run. Because Sigma rules are just YAML files, they are easy to version control, review, and share. If you find a new way to detect T1548 Abuse Elevation Control Mechanism, you can roll that rule out everywhere it applies through a simple pull request.
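
To make the shape of a Sigma rule concrete, here is a toy example written as a Python dictionary that mirrors the YAML fields (`title`, `logsource`, `detection`, `level`, `tags`), plus a deliberately tiny matcher. The rule is illustrative and not taken from any published rule set, and the matcher is an assumption-laden sketch that handles only a single `selection` with the `endswith` and `contains` modifiers. Real deployments would use Sigma's own tooling to convert rules into SIEM queries rather than evaluate them like this.

```python
# Illustrative rule for T1548.001 (Setuid and Setgid); not a vetted detection.
RULE = {
    "title": "Setuid Bit Set via chmod (illustrative)",
    "status": "experimental",
    "tags": ["attack.privilege_escalation", "attack.t1548.001"],
    "logsource": {"category": "process_creation", "product": "linux"},
    "detection": {
        "selection": {
            "Image|endswith": "/chmod",
            "CommandLine|contains": ["u+s", "4755"],  # list values are OR'd
        },
        "condition": "selection",
    },
    "level": "medium",
}


def _field_matches(key, expected, event):
    field, _, modifier = key.partition("|")
    actual = event.get(field)
    if actual is None:
        return False
    # Sigma string matching is case-insensitive by default, so lowercase both sides.
    actual = str(actual).lower()
    candidates = expected if isinstance(expected, list) else [expected]
    candidates = [str(c).lower() for c in candidates]
    if modifier == "endswith":
        return any(actual.endswith(c) for c in candidates)
    if modifier == "contains":
        return any(c in actual for c in candidates)
    if modifier == "":
        return any(actual == c for c in candidates)
    raise ValueError(f"unsupported modifier in this sketch: {modifier}")


def matches(rule, event):
    """True if every field in the rule's selection matches the event (fields are AND'd)."""
    detection = rule["detection"]
    if detection["condition"] != "selection":
        raise ValueError("this sketch only handles the condition 'selection'")
    return all(
        _field_matches(key, expected, event)
        for key, expected in detection["selection"].items()
    )


HIT = matches(RULE, {"Image": "/usr/bin/chmod", "CommandLine": "chmod u+s /tmp/sh"})
MISS = matches(RULE, {"Image": "/usr/bin/chmod", "CommandLine": "chmod 644 notes.txt"})
```

Because the rule is plain data, a change to the `selection` shows up as a small, reviewable diff, which is what makes the pull-request workflow practical.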

Integrating Response into Existing Workflows

Stop forcing your team to log into a separate dashboard to see if something is on fire. If your team lives in Slack, your alerts should live in Slack. When a high-severity alert triggers, it should automatically open an incident channel, pull in the relevant logs, and tag the appropriate system owner.
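
One way to picture that flow is a function that turns a high-severity alert into an incident "plan": a channel name, a message with the owner tagged, and a short log excerpt. Everything below is a sketch under assumptions: the `SYSTEM_OWNERS` mapping, the user ID, the alert fields, and the severity set are hypothetical. The code only builds the payload; actually creating the channel and posting to it (for example through Slack's `conversations.create` and `chat.postMessage` Web API methods) is left out.

```python
import re

# Hypothetical mapping from system name to the owner's Slack user ID.
SYSTEM_OWNERS = {"payments-api": "U012ABCDEF"}

# Assumed severities that justify opening a dedicated channel.
INCIDENT_SEVERITIES = {"high", "critical"}


def incident_channel_name(alert_id: str, system: str) -> str:
    """Build a channel name that is lowercase, has no spaces, and is at most 80 characters."""
    raw = f"inc-{alert_id}-{system}".lower()
    slug = re.sub(r"[^a-z0-9_-]+", "-", raw).strip("-")
    return slug[:80]


def incident_plan(alert: dict):
    """Return a channel name and message payload, or None for lower severities."""
    if alert["severity"] not in INCIDENT_SEVERITIES:
        return None

    owner = SYSTEM_OWNERS.get(alert["system"])
    mention = f"<@{owner}>" if owner else "(no owner on record)"
    summary = f"{alert['severity'].upper()}: {alert['title']} on {alert['system']}"
    # Quote at most five log lines so the message stays readable.
    excerpt = "\n".join("> " + line for line in alert["log_excerpt"][:5])

    return {
        "channel_name": incident_channel_name(alert["id"], alert["system"]),
        "message": {
            "text": summary,  # plain-text fallback for notifications
            "blocks": [
                {"type": "section",
                 "text": {"type": "mrkdwn", "text": f"*{summary}*\nOwner: {mention}"}},
                {"type": "section",
                 "text": {"type": "mrkdwn", "text": excerpt or "(no logs attached)"}},
            ],
        },
    }


# Sample alert with made-up values, for illustration only.
PLAN = incident_plan({
    "id": "42",
    "system": "payments-api",
    "severity": "high",
    "title": "Valid account used from unusual network",
    "log_excerpt": ["login user=alice src=203.0.113.9 result=success"],
})
```

Keeping payload construction separate from the API calls makes the routing logic easy to unit test without a Slack workspace.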

This is where you build trust with other teams. If you only ever show up to tell them they did something wrong, you will be viewed as a blocker. If you show up with a specific, actionable alert and a proposed solution, you become a partner. For example, if you detect a misconfiguration in an AWS environment, don't just send a generic alert. Use a tool like Tracecat to automate the initial triage and provide the system owner with the exact steps to remediate the issue.

The Human Element of Scaling

Automation is not a replacement for human judgment. There will always be edge cases where an alert looks malicious but is actually a legitimate business process. When this happens, do not just silence the alert. Use it as an opportunity to refine your detection logic.

If you are constantly getting paged for the same false positive, your detection rule is broken. Fix the rule. If you are getting paged for something that is technically suspicious but operationally necessary, add an exception. This is a continuous process. You are not just training a detection engine; you are training your team to understand the difference between a real incident and a standard operational quirk.
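
The two cases above (a broken rule versus a legitimate exception) can be tracked with very little machinery. The sketch below assumes a hypothetical triage log with `rule` and `verdict` fields, an arbitrary threshold of three false positives, and an exception format invented for this example.

```python
from collections import Counter

# Assumed threshold; tune it to how much paging your team can tolerate.
FALSE_POSITIVE_THRESHOLD = 3


def rules_needing_fixes(triage_log):
    """Rules whose alerts were closed as false positives at least THRESHOLD times."""
    counts = Counter(
        entry["rule"] for entry in triage_log if entry["verdict"] == "false_positive"
    )
    return sorted(
        rule for rule, count in counts.items() if count >= FALSE_POSITIVE_THRESHOLD
    )


def is_excepted(alert, exceptions):
    """True if every field of at least one exception matches the alert."""
    return any(
        all(alert.get(field) == value for field, value in exc["match"].items())
        for exc in exceptions
    )


# Hypothetical data, for illustration only.
TRIAGE_LOG = [
    {"rule": "setuid-chmod", "verdict": "false_positive"},
    {"rule": "setuid-chmod", "verdict": "false_positive"},
    {"rule": "setuid-chmod", "verdict": "false_positive"},
    {"rule": "unusual-login", "verdict": "true_positive"},
    {"rule": "unusual-login", "verdict": "false_positive"},
]
EXCEPTIONS = [
    # Each exception records why it exists so it can be reviewed later.
    {"match": {"rule": "unusual-login", "user": "svc-backup"},
     "reason": "nightly backup job runs from a rotating address"},
]

TO_FIX = rules_needing_fixes(TRIAGE_LOG)
SUPPRESSED = is_excepted({"rule": "unusual-login", "user": "svc-backup"}, EXCEPTIONS)
```

Storing a `reason` with each exception keeps the suppression list auditable, so an exception added for one operational quirk does not quietly hide a real incident later.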

Ultimately, the goal is to move from reactive firefighting to proactive risk management. You cannot stop every attack, but you can make it significantly harder for an adversary to move through your environment undetected. By keeping your processes simple, leveraging open-source standards like Sigma, and meeting your colleagues where they already work, you build a security culture that actually functions under pressure. If you are still manually triaging every single alert, you are already behind. Start by automating the easy stuff, and use the time you save to hunt for the threats that really matter.

Vulnerability Classes

Attack Techniques

OWASP Categories

Up Next From This Conference

Similar Talks

The Dark Side of Bug Bounty

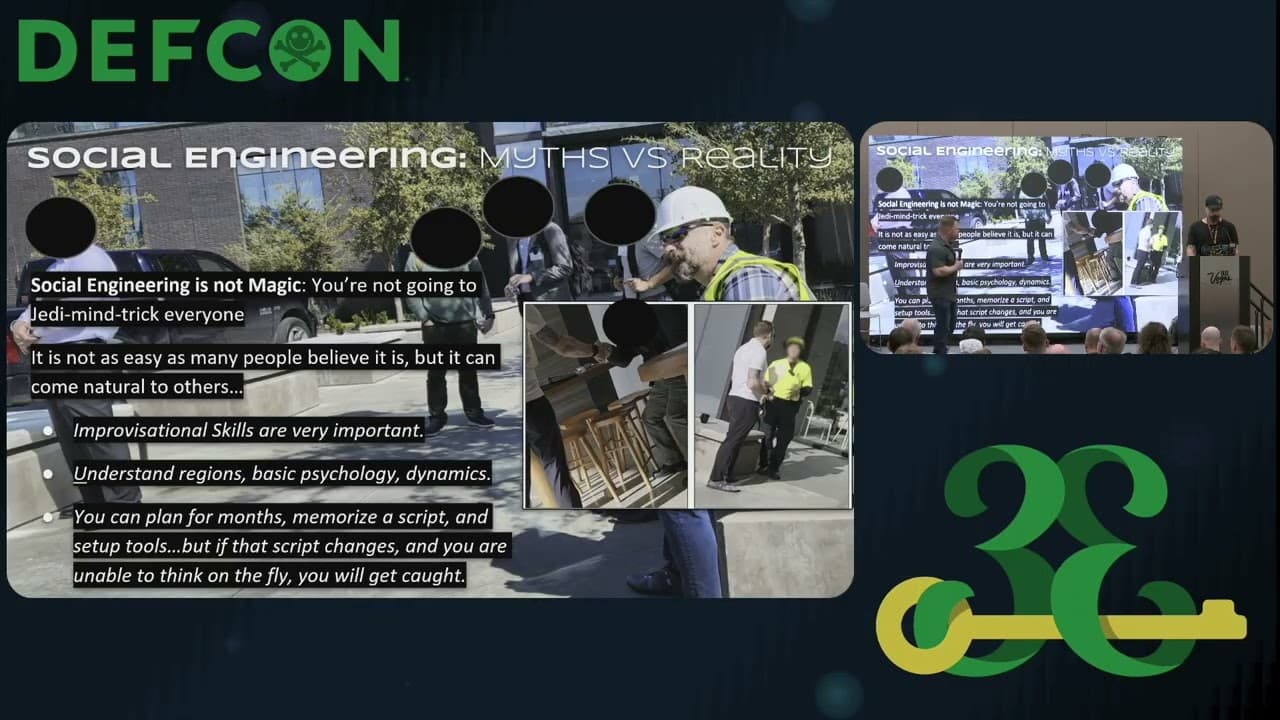

Social Engineering A.I. and Subverting H.I.