Autonomous Video Hunter: AI Agents for Real-Time OSINT

This talk demonstrates an autonomous AI agent framework designed to perform real-time OSINT by processing and analyzing video streams. The system leverages multimodal AI models to extract structured data, perform object detection, and conduct facial recognition from video content. The framework utilizes a modular architecture with specialized sub-agents for visual, facial, and content analysis to automate intelligence gathering. The speaker provides a proof-of-concept implementation that integrates with tools like Claude Desktop via the Model Context Protocol (MCP) to visualize and query findings.

Automating OSINT: How AI Agents Turn Video Streams into Actionable Intelligence

TLDR: Modern OSINT workflows are drowning in raw video data, but new autonomous AI agent frameworks can now process these streams in real time to extract structured intelligence. By chaining multimodal models with the Model Context Protocol, researchers can automate object detection, facial recognition, and sentiment analysis across platforms like TikTok and Discord. This moves OSINT from manual, time-intensive review to automated, queryable intelligence gathering.

Video content is the new frontier for OSINT, yet most practitioners still treat it as a static artifact. We download a clip, watch it, maybe run a frame-by-frame analysis, and hope we didn't miss a detail. This manual approach fails when you are tracking a target across hours of footage or monitoring multiple social media channels simultaneously. The real-world risk is clear: while you are manually scrubbing through a single video, a target is generating hours of new, potentially incriminating content that remains unindexed and invisible to your investigation.

The Architecture of an Autonomous Video Hunter

Recent research presented at DEF CON 33 demonstrates a shift toward autonomous agents that treat video as a dynamic data source rather than a passive file. The framework relies on a modular pipeline that separates ingestion, processing, and analysis. Instead of building a monolithic tool, the approach uses LangGraph to orchestrate specialized sub-agents.
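The routing idea behind this modular design can be sketched in plain Python. This is a minimal illustration and deliberately does not use LangGraph's API; the sub-agent names, the shared `state` dictionary, and `run_pipeline` are all hypothetical stand-ins, not the speaker's implementation.

```python
from typing import Any, Callable, Dict, List

# Shared state threaded through the sub-agents; the keys are hypothetical.
State = Dict[str, Any]

def visual_agent(state: State) -> State:
    # Placeholder: a real sub-agent would call a multimodal model here.
    return {**state, "visual": f"described {len(state['frames'])} frames"}

def facial_agent(state: State) -> State:
    # Placeholder: a real sub-agent would run face detection and matching.
    return {**state, "faces": []}

def content_agent(state: State) -> State:
    # Placeholder: a real sub-agent would analyse transcript and on-screen text.
    return {**state, "summary": "no transcript analysed"}

SUB_AGENTS: Dict[str, Callable[[State], State]] = {
    "visual": visual_agent,
    "facial": facial_agent,
    "content": content_agent,
}

def run_pipeline(state: State, plan: List[str]) -> State:
    """Run the named sub-agents in order, passing the state through each."""
    for name in plan:
        state = SUB_AGENTS[name](state)
    return state

result = run_pipeline({"frames": ["f0", "f1"]}, ["visual", "facial", "content"])
```

Keeping each sub-agent as a function from state to state is what makes the pipeline modular: a sub-agent can be swapped or reordered without touching the others.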

The pipeline starts with a video listener that scrapes content based on specific hashtags or keywords. Once the raw video is captured, it is passed to a video processor. This component is the heavy lifter, breaking the video into frames and extracting three distinct data streams: visual descriptions, automatic speech transcription, and on-screen text detection. By using these multimodal inputs, the system creates a dense, time-stamped index of what is happening in the video.
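One way to picture the time-stamped index is as a merge of the three streams keyed by timestamp. The sketch below assumes each stream arrives as `(timestamp, text)` pairs; the `IndexEntry` type, the `build_index` function, and the sample data are hypothetical and only illustrate the shape of the output.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Tuple

Stream = Iterable[Tuple[float, str]]

@dataclass
class IndexEntry:
    timestamp: float  # seconds from the start of the video
    visual: List[str] = field(default_factory=list)
    speech: List[str] = field(default_factory=list)
    ocr: List[str] = field(default_factory=list)

def build_index(visual: Stream, speech: Stream, ocr: Stream) -> List[IndexEntry]:
    """Merge three (timestamp, text) streams into one index sorted by time."""
    entries: Dict[float, IndexEntry] = {}
    for stream_name, stream in (("visual", visual), ("speech", speech), ("ocr", ocr)):
        for ts, text in stream:
            entry = entries.setdefault(ts, IndexEntry(timestamp=ts))
            getattr(entry, stream_name).append(text)
    return [entries[ts] for ts in sorted(entries)]

index = build_index(
    visual=[(0.0, "person at a reception desk"), (2.0, "glass door with logo")],
    speech=[(0.0, "welcome to the office tour")],
    ocr=[(2.0, "ACME CORP")],
)
```

Each entry now answers "what was seen, said, and written at this moment", which is the form later queries need.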

The technical core of this system is its ability to perform targeted extraction. Rather than just dumping a transcript, the agent uses a zero-shot object detector, specifically one built on the Grounding DINO architecture, to identify arbitrary objects such as logos, vehicles, or specific building facades. When the agent encounters a frame of interest, it triggers a secondary analysis:

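# Conceptual snippet for triggering object detection.
# `grounding_dino` is a placeholder for a loaded detector wrapper; `predict`,
# `has_match`, and `bounding_boxes` are illustrative names, not a library API.
def detect_objects(frame, target_list):
    # Ask the zero-shot detector for any of the target labels in this frame.
    results = grounding_dino.predict(frame, target_list)
    if results.has_match:
        return results.bounding_boxes
    return None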

Moving Beyond Manual Analysis

For a pentester or a bug bounty hunter, the utility of this framework lies in its ability to turn "noise" into "intelligence." Imagine you are performing a physical security assessment or a social engineering engagement. You need to identify employees, map out office layouts, or track the movement of a specific individual across social media posts.

By integrating this framework with Claude Desktop via the Model Context Protocol, you can query your investigation findings using natural language. You aren't just searching for a filename; you are asking the agent, "Show me all videos where the target is wearing a company badge and standing in front of a specific lobby logo." The agent then queries its local context store, which contains the structured metadata extracted from the video processing phase, and returns the relevant clips and timestamps.
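The lookup behind such a question can be sketched as a filter over structured records. This assumes the model has already translated the natural-language request into a set of required labels; the record layout, `CONTEXT_STORE`, and `find_moments` are hypothetical, and no MCP API is shown here.

```python
from typing import Any, Dict, List, Set, Tuple

# Hypothetical records, shaped as the video processing phase might emit them.
CONTEXT_STORE: List[Dict[str, Any]] = [
    {"video": "clip_a.mp4", "timestamp": 12.5, "labels": {"company badge", "lobby logo"}},
    {"video": "clip_a.mp4", "timestamp": 40.0, "labels": {"vehicle"}},
    {"video": "clip_b.mp4", "timestamp": 3.0, "labels": {"company badge"}},
]

def find_moments(store: List[Dict[str, Any]], required: Set[str]) -> List[Tuple[str, float]]:
    """Return (video, timestamp) pairs whose labels include every required label."""
    return [
        (rec["video"], rec["timestamp"])
        for rec in store
        if required <= rec["labels"]  # subset test: all required labels present
    ]

hits = find_moments(CONTEXT_STORE, {"company badge", "lobby logo"})
```

In a setup like the one described, a function of this kind would be what the desktop client calls as a tool, with the language model supplying the label set.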

The facial recognition component uses RetinaFace for detection and VGG-Face for matching. Because these models are configured to favor CPU-based inference, the framework remains portable enough to run on a standard laptop during an engagement, avoiding the need for expensive cloud GPU clusters.
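The matching step typically reduces to comparing embedding vectors. The sketch below assumes cosine distance and an arbitrary threshold of 0.4; the source does not state which metric or threshold the framework uses, and the three-dimensional vectors are toy stand-ins for the much larger embeddings a model such as VGG-Face produces.

```python
import math
from typing import Dict, Optional, Sequence

def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity; assumes both vectors are non-zero."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def best_match(
    probe: Sequence[float],
    gallery: Dict[str, Sequence[float]],
    threshold: float = 0.4,  # illustrative value, not taken from the talk
) -> Optional[str]:
    """Return the closest gallery identity, or None if none is within threshold.

    Assumes a non-empty gallery.
    """
    name, dist = min(
        ((n, cosine_distance(probe, emb)) for n, emb in gallery.items()),
        key=lambda pair: pair[1],
    )
    return name if dist <= threshold else None

# Toy embeddings for two known identities (hypothetical data).
gallery = {"person_a": [1.0, 0.0, 0.0], "person_b": [0.0, 1.0, 0.0]}
match = best_match([0.9, 0.1, 0.0], gallery)
no_match = best_match([0.0, 0.0, 1.0], gallery)
```

Returning `None` when nothing falls under the threshold matters in practice: without it, every detected face would be assigned to whichever known identity happens to be nearest.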

The Defensive Reality

Defenders should recognize that this level of automation is no longer the domain of nation-state actors. If an OSINT researcher can automate the identification of sensitive information in video streams, so can a malicious actor looking to map your organization's physical security or identify key personnel.

The primary defense against this type of automated reconnaissance is strict control over the visual information your organization and employees share publicly. Metadata in video files, identifiable landmarks, and even subtle details like employee badges or internal signage are now easily indexed by autonomous agents. If you are in a security-sensitive role, assume that any video you post is being processed by an agent that can correlate it with other data points to build a comprehensive profile of your environment.

What to Investigate Next

The power of this approach is not in any single model, but in the orchestration of the pipeline. The autonomous video hunter proof-of-concept provides a solid foundation, but the real potential lies in customizing the sub-agents. If you are working on a long-term investigation, consider how you can extend the context store to include non-video data, such as geolocation logs or public records, to create a truly unified intelligence graph.
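As a rough sketch of what a unified store might look like, records from different sources can be linked through the entities they mention. The source names, record identifiers, and `build_entity_graph` below are hypothetical and only illustrate the idea of joining video findings with non-video data.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

# (source, record id, entities mentioned); all values are made-up examples.
Record = Tuple[str, str, Set[str]]

def build_entity_graph(records: List[Record]) -> Dict[str, Set[str]]:
    """Map each entity to the records, from any source, that mention it."""
    graph: Dict[str, Set[str]] = defaultdict(set)
    for source, record_id, entities in records:
        for entity in entities:
            graph[entity].add(f"{source}:{record_id}")
    return dict(graph)

records: List[Record] = [
    ("video", "clip_a@12.5s", {"acme lobby", "person_a"}),
    ("geolocation", "log_17", {"acme lobby"}),
    ("public_record", "filing_3", {"person_a"}),
]
graph = build_entity_graph(records)
```

An entity that appears in more than one source, such as `"acme lobby"` here, is where a video frame and a location log corroborate each other.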

Stop treating video as a final product. Start treating it as a raw data stream that, when processed correctly, can provide the missing link in your next investigation. The tools to automate this are open source and ready for deployment; the only remaining variable is how effectively you can define the mission for your agents.

Target Technologies

All Tags

Up Next From This Conference

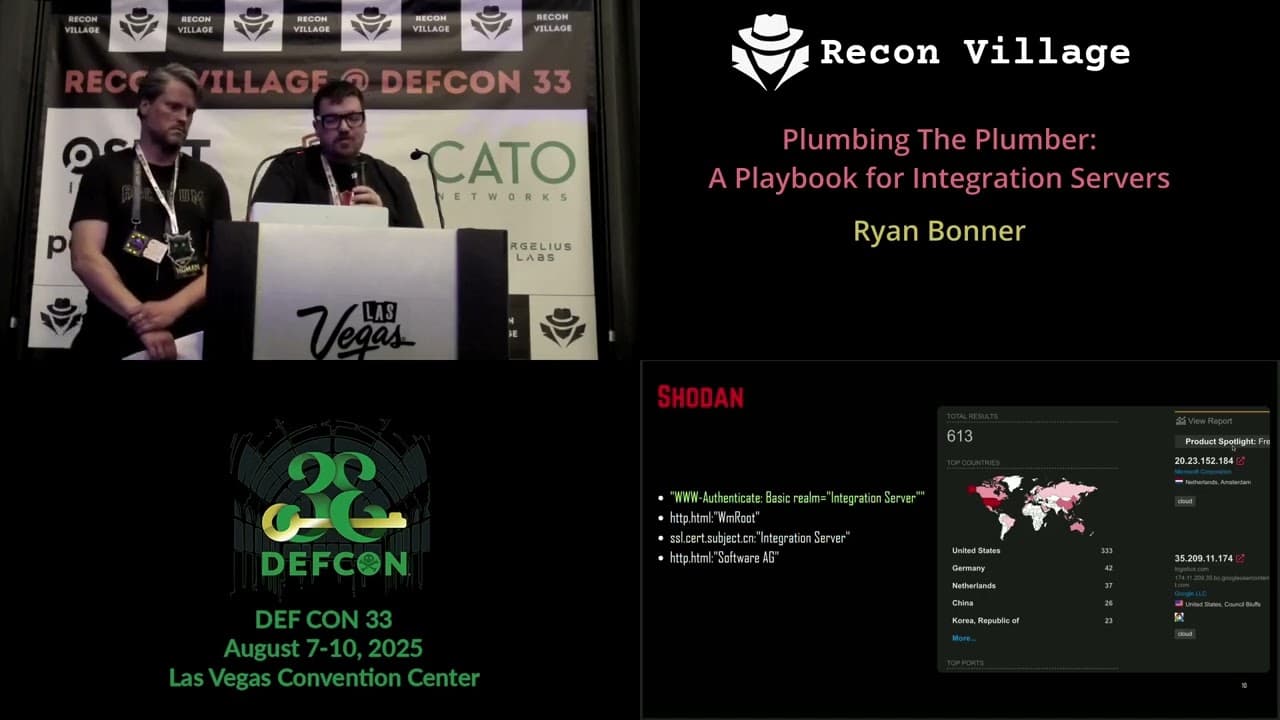

Plumbing The Plumber: A Playbook For Integration Servers

Mapping the Shadow War: From Estonia to Ukraine

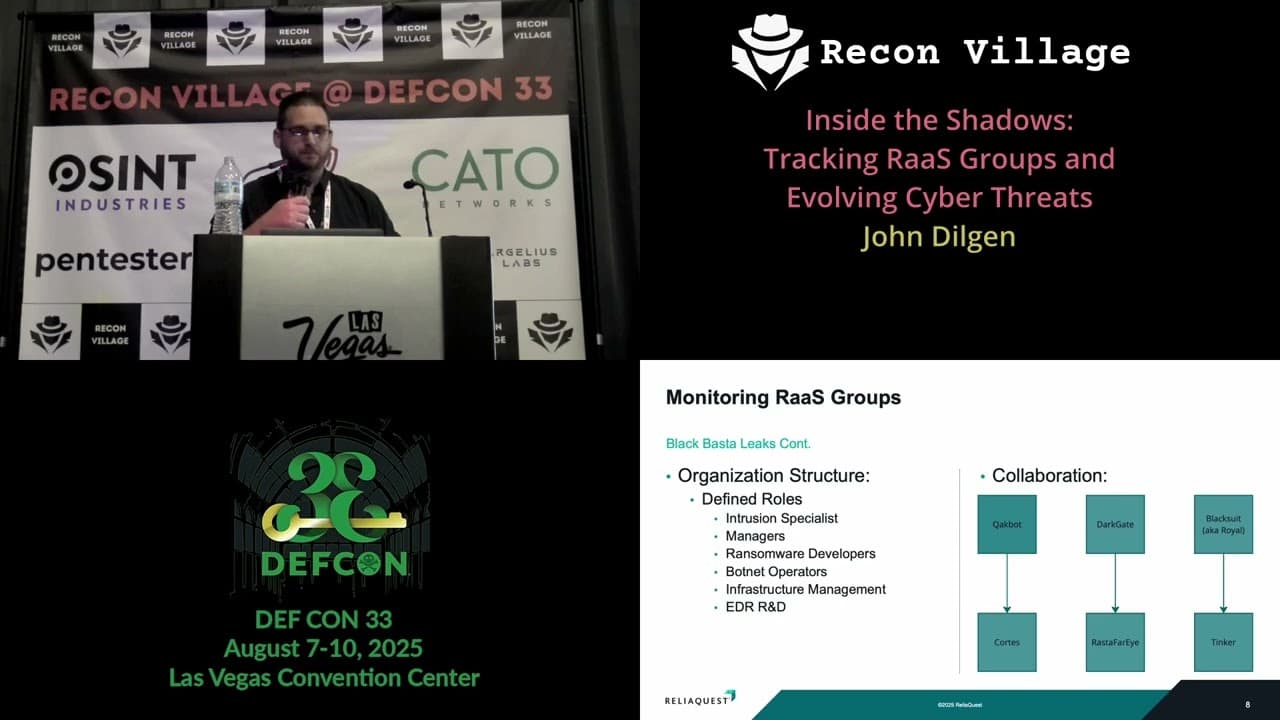

Inside the Shadows: Tracking RaaS Groups and Evolving Cyber Threats

Similar Talks

Inside the FBI's Secret Encrypted Phone Company 'Anom'

Kill List: Hacking an Assassination Site on the Dark Web