Building Local Knowledge Graphs for OSINT: Bypassing Rate Limits and Maintaining OPSEC

This talk demonstrates a methodology for building local, queryable knowledge graphs to bypass API rate limits and maintain operational security during OSINT investigations. By leveraging local LLMs and RDF-based data structures, investigators can aggregate and cross-reference data from multiple sources without triggering external monitoring or rate-limiting mechanisms. The speaker provides a practical workflow for data extraction, normalization, and graph construction, enabling more robust and repeatable analysis. A custom Rust-based tool is introduced to facilitate automated, privacy-conscious data collection.

Scraping at Scale: Building Local Knowledge Graphs to Bypass API Rate Limits

TLDR: Modern OSINT investigations often hit hard walls when API rate limits or anti-scraping protections block automated data collection. By shifting from live, query-based scraping to a local RDF-based knowledge graph, researchers can aggregate disparate data points and perform complex relationship analysis offline. This approach not only bypasses rate limits but also enables the use of local LLMs to extract structured insights from unstructured web content without leaking investigative intent.

Security researchers and bug bounty hunters are increasingly finding that the "easy" targets are gone. When you are performing reconnaissance on a large organization, you rarely get a clean, unauthenticated API endpoint that lets you dump the entire employee directory or organizational chart. Instead, you face aggressive rate limiting, WAFs, and dynamic content that requires a full browser stack to render. If you try to brute-force these defenses, you get blocked. If you try to use a standard LLM-as-a-service to parse the results, you risk leaking your specific areas of interest to a third party.

The research presented at DEF CON 33 by Donald Pellegrino offers a practical way to solve this by decoupling the collection phase from the analysis phase. Instead of querying a live service for every single data point, you build a local, queryable knowledge graph.

The Mechanics of Local Knowledge Graphs

At the core of this technique is the transition from a "query-response" model to a "data-ingestion" model. In a traditional setup, you might use a script to hit an endpoint 500 times to map out relationships between speakers at a conference. Each request is a potential trigger for a rate-limit block or a log entry in the target's SIEM.
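The difference between the two models can be sketched as a fetch-once snapshot store. This is a minimal illustration and not the speaker's tool: `SnapshotStore`, `fake_fetch`, the example URL, and the on-disk layout are all hypothetical, and the fetch function is a stand-in for whatever browser-driven collector you actually use.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Callable


class SnapshotStore:
    """Keeps one on-disk copy of each page so analysis never re-fetches it."""

    def __init__(self, root: Path, fetch: Callable[[str], str]) -> None:
        self.root = root
        self.fetch = fetch        # hypothetical: any function mapping URL -> HTML
        self.network_hits = 0     # counts requests that actually left the machine
        self.root.mkdir(parents=True, exist_ok=True)

    def _path(self, url: str) -> Path:
        # Hash the URL so that any URL maps to a safe file name.
        digest = hashlib.sha256(url.encode("utf-8")).hexdigest()
        return self.root / f"{digest}.html"

    def get(self, url: str) -> str:
        path = self._path(url)
        if path.exists():
            # Served from the local snapshot: nothing reaches the target.
            return path.read_text(encoding="utf-8")
        html = self.fetch(url)
        self.network_hits += 1
        path.write_text(html, encoding="utf-8")
        return html


def fake_fetch(url: str) -> str:
    # Stand-in for a real collector; returns canned HTML for the demo.
    return f"<html><body>snapshot of {url}</body></html>"


store = SnapshotStore(Path(tempfile.mkdtemp()), fake_fetch)
for _ in range(500):
    page = store.get("https://example.org/schedule")
print(store.network_hits)
```

The 500 reads in the loop cost a single outbound request; every later lookup is answered from disk.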

By using Playwright with a headless Chromium instance, you can capture the full DOM, including the JavaScript-rendered elements that plain curl or wget requests miss. Once you have the raw HTML, you don't just store it as a flat file. You convert it into RDF (Resource Description Framework) triples and load them into a local triple store. Each triple is a subject-predicate-object statement, which lets you define entities and their relationships, for example [Speaker] -> [SpeaksAt] -> [Conference].
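To make the triple idea concrete, here is a small sketch of subject-predicate-object storage with wildcard matching. It is a toy stand-in, not a real RDF store: the `TripleStore` class, the `ex:` identifiers, and the sample people are assumptions for illustration. In practice you would more likely use an RDF library or a SPARQL-capable database.

```python
from typing import List, Optional, Set, Tuple

Triple = Tuple[str, str, str]


class TripleStore:
    """Tiny in-memory stand-in for an RDF triple store (illustration only)."""

    def __init__(self) -> None:
        self._triples: Set[Triple] = set()

    def add(self, subject: str, predicate: str, obj: str) -> None:
        self._triples.add((subject, predicate, obj))

    def match(self, s: Optional[str] = None, p: Optional[str] = None,
              o: Optional[str] = None) -> List[Triple]:
        # None acts as a wildcard, much like a variable in a SPARQL pattern.
        return sorted(
            t for t in self._triples
            if (s is None or t[0] == s)
            and (p is None or t[1] == p)
            and (o is None or t[2] == o)
        )


graph = TripleStore()
graph.add("ex:alice", "ex:speaksAt", "ex:ExampleCon")
graph.add("ex:bob", "ex:speaksAt", "ex:ExampleCon")
graph.add("ex:alice", "ex:memberOf", "ex:ExampleOrg")

# Who speaks at ExampleCon? Answered locally, with no network request.
speakers = [s for s, _p, _o in graph.match(p="ex:speaksAt", o="ex:ExampleCon")]
print(speakers)
```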

Because this data is now local, you can run as many queries as you want against your own graph database without ever touching the target's infrastructure again. You are essentially building a private, offline mirror of the target's public-facing data.

Integrating Local LLMs for Data Extraction

The real power comes when you introduce local LLMs into the pipeline. Using tools like llama.cpp or LM Studio, you can run models on your own hardware. This is critical for OPSEC. When you send a prompt to a hosted model like GPT-4 or Claude, you are sending your investigative context to a third-party provider. If you are researching a sensitive target, that exposure is a liability.

With a local model, you can feed it the raw HTML you scraped and ask it to extract specific entities and relationships in a structured format like JSON-LD. You can then map these to your RDF schema. This allows you to turn messy, unstructured web pages into a clean, graph-based dataset.
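The mapping step from model output to triples might look like the sketch below. It assumes the local model has already returned a JSON-LD document; `model_output` is canned sample data, not real model output, and `jsonld_to_triples` is a hypothetical helper. It handles only `@id`, `@type`, and flat properties, and it does not expand `@context` the way a full JSON-LD processor would.

```python
import json
from typing import List, Tuple

Triple = Tuple[str, str, str]
RDF_TYPE = "rdf:type"


def jsonld_to_triples(doc: str) -> List[Triple]:
    """Flatten a simple JSON-LD document into (subject, predicate, object)."""
    data = json.loads(doc)
    if isinstance(data, dict):
        nodes = data.get("@graph", [data])
    else:
        nodes = data
    triples: List[Triple] = []
    for node in nodes:
        subject = node["@id"]
        for key, value in node.items():
            if key in ("@id", "@context"):
                continue
            predicate = RDF_TYPE if key == "@type" else key
            values = value if isinstance(value, list) else [value]
            for v in values:
                if isinstance(v, dict):
                    # Reference to another node, or a typed literal.
                    obj = str(v.get("@id", v.get("@value", "")))
                else:
                    obj = str(v)
                triples.append((subject, predicate, obj))
    return triples


# Canned example of what a local model might be prompted to return.
model_output = """
{
  "@context": {"foaf": "http://xmlns.com/foaf/0.1/"},
  "@graph": [
    {"@id": "ex:alice",
     "@type": "foaf:Person",
     "foaf:name": "Alice Example",
     "ex:speaksAt": {"@id": "ex:ExampleCon"}}
  ]
}
"""

for triple in jsonld_to_triples(model_output):
    print(triple)
```

Model output is not guaranteed to be valid JSON, so a real pipeline would want to wrap `json.loads` in error handling and validate the result before loading it into the graph.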

Here is a simplified example of how you might structure a collection command using a custom Rust-based tool to handle the browser automation:

# Example of triggering a local collection process

./collect_tool --target "https://reconvillage.org/schedule" --output "./data/raw_html"

# Then, process the raw data into the triple store

./process_tool --input "./data/raw_html" --schema "foaf_ontology.json" --db "./graph.db"

Real-World Applicability for Pentesters

During a red team engagement or a deep-dive bug bounty hunt, you often need to map out an organization's internal structure, identify key personnel, or find hidden relationships between different business units. This methodology is perfect for that.

Imagine you are testing a portal that requires you to map out 50 different departments. Instead of hitting the portal 50 times and risking a lockout, you scrape the entire directory once, store it in your local graph, and then spend hours querying your local database to find the most interesting nodes. You can perform complex graph traversals, such as finding the shortest path between a specific employee and a sensitive internal project, without the target ever knowing you are performing that analysis.
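A shortest-path traversal of that kind can be sketched with a breadth-first search over locally stored triples. The sample triples, the `ex:` names, and the `shortest_path` helper are hypothetical, and edges are treated as undirected for simplicity. A real graph database would typically offer its own path queries.

```python
from collections import deque
from typing import Dict, List, Optional, Set, Tuple

Triple = Tuple[str, str, str]


def shortest_path(triples: List[Triple], start: str,
                  goal: str) -> Optional[List[str]]:
    """Breadth-first search, treating every triple as an undirected edge."""
    neighbors: Dict[str, Set[str]] = {}
    for s, _p, o in triples:
        neighbors.setdefault(s, set()).add(o)
        neighbors.setdefault(o, set()).add(s)

    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        # Sort for deterministic output when several neighbors exist.
        for nxt in sorted(neighbors.get(node, ())):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None  # no connection found in the local graph


# Hypothetical directory data, already collected and stored locally.
org_triples = [
    ("ex:alice", "ex:memberOf", "ex:platform-team"),
    ("ex:bob", "ex:memberOf", "ex:platform-team"),
    ("ex:bob", "ex:worksOn", "ex:project-x"),
    ("ex:carol", "ex:memberOf", "ex:finance"),
]

print(shortest_path(org_triples, "ex:alice", "ex:project-x"))
```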

Defensive Considerations

Defenders need to recognize that this is a "low and slow" threat. Because the attacker is only hitting the site to collect the raw data and doing all the heavy lifting offline, traditional rate-limiting based on request frequency is less effective.

To counter this, organizations should consult OWASP's Automated Threats to Web Applications, with particular attention to detecting headless browser signatures and implementing more sophisticated challenges that require genuine user interaction. If you are a defender, look for patterns in your logs where a single IP or user agent is systematically crawling your site in a way that mimics a full browser render but doesn't follow typical human navigation patterns.
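One way a defender might start on that log review is sketched below. It flags clients that touch many distinct paths at suspiciously regular intervals. The log tuple format, the thresholds, and the `flag_systematic_crawlers` helper are assumptions for illustration, not a tested detection rule, and a crawler that randomizes its timing would evade it.

```python
from collections import defaultdict
from statistics import pstdev
from typing import Dict, List, Tuple

LogEntry = Tuple[float, str, str]  # (unix timestamp, client IP, request path)


def flag_systematic_crawlers(entries: List[LogEntry],
                             min_paths: int = 20,
                             max_gap_stdev: float = 0.5) -> List[str]:
    """Return client IPs with broad coverage and machine-like request timing."""
    by_client: Dict[str, List[Tuple[float, str]]] = defaultdict(list)
    for ts, ip, path in entries:
        by_client[ip].append((ts, path))

    flagged = []
    for ip, hits in by_client.items():
        hits.sort()
        distinct_paths = {path for _ts, path in hits}
        gaps = [b[0] - a[0] for a, b in zip(hits, hits[1:])]
        # Humans browse few pages with irregular pauses; a scripted crawl
        # tends to cover many pages with nearly constant spacing.
        if (len(distinct_paths) >= min_paths and gaps
                and pstdev(gaps) <= max_gap_stdev):
            flagged.append(ip)
    return sorted(flagged)


# Synthetic log: one scripted client and one human-like client.
log: List[LogEntry] = [
    (1000.0 + 2.0 * i, "203.0.113.5", f"/directory/{i}") for i in range(30)
]
log += [
    (1000.0, "198.51.100.7", "/"),
    (1004.0, "198.51.100.7", "/about"),
    (1051.0, "198.51.100.7", "/directory/3"),
    (1053.0, "198.51.100.7", "/directory/4"),
    (1190.0, "198.51.100.7", "/contact"),
]

print(flag_systematic_crawlers(log))
```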

Ultimately, the goal of this research is to shift the advantage back to the investigator. By building your own local infrastructure, you stop depending on the target's API limitations and start controlling the pace of your own research. The next time you find yourself blocked by a rate limit, stop trying to bypass it and start building your own graph instead.

Vulnerability Classes

Target Technologies

Up Next From This Conference

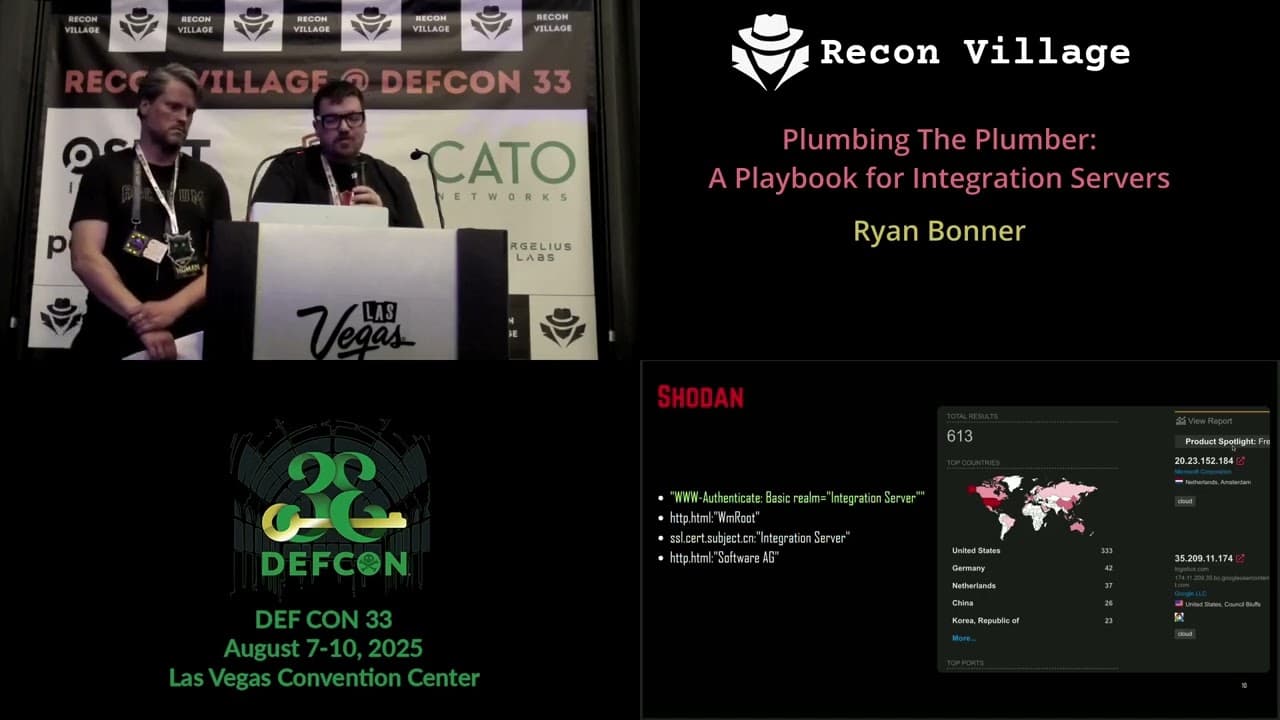

Plumbing The Plumber: A Playbook For Integration Servers

Mapping the Shadow War: From Estonia to Ukraine

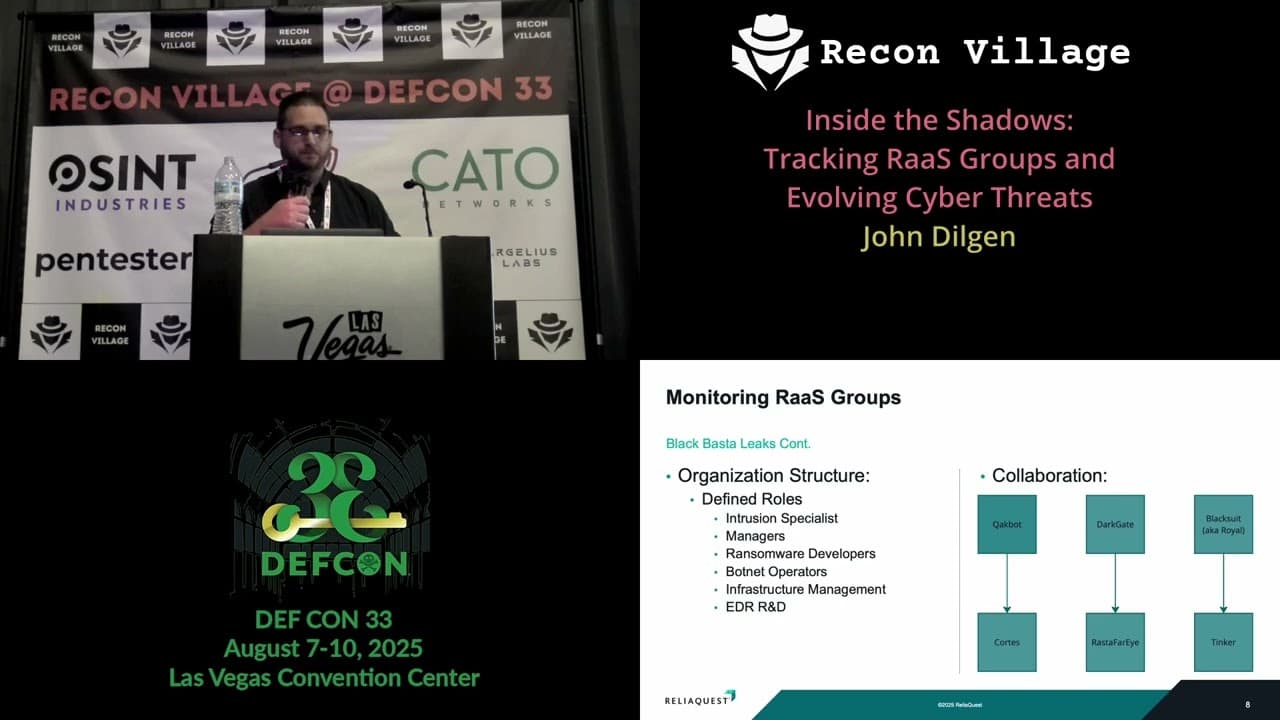

Inside the Shadows: Tracking RaaS Groups and Evolving Cyber Threats

Similar Talks

Inside the FBI's Secret Encrypted Phone Company 'Anom'

Kill List: Hacking an Assassination Site on the Dark Web