Robin: The Archaeologist of the Dark Web

This talk introduces Robin, an AI-powered OSINT tool designed to automate the collection, filtering, and analysis of data from the dark web. The tool leverages Large Language Models (LLMs) to refine search queries, scrape onion sites, and generate structured intelligence reports containing key artifacts like crypto addresses and threat actor aliases. It addresses the inefficiencies of manual dark web investigation by providing a unified CLI and web interface for complex research workflows. The presentation includes a demonstration of the tool's capability to process search results and produce actionable threat intelligence.

Automating Dark Web Recon with LLM-Powered Pipelines

TLDR: Manual dark web OSINT is a time sink that often yields fragmented, low-quality data. The Robin tool automates the entire lifecycle of a dark web investigation by chaining LLM-based query refinement, automated scraping, and structured intelligence reporting. This approach allows researchers to pivot from a simple search term to a comprehensive threat report in minutes rather than hours.

Security researchers and penetration testers often treat dark web reconnaissance as a chore. It is a fragmented process: you start by querying a handful of search engines like Ahmia, manually verify whether the onion links are still alive, scrape the content, and then spend hours parsing the output for anything resembling a lead. If you are tracking threat actors or monitoring for leaked credentials, this manual overhead is not just inefficient; it is a bottleneck that keeps you from actually analyzing the data.

The core problem is that most existing tools are disjointed. You have scanners, you have crawlers, and you have search engines, but you lack a unified pipeline that understands the context of your investigation. When you are hunting for information on a specific ransomware group, you do not need a list of five hundred random onion links. You need the specific artifacts that matter: crypto addresses, threat actor aliases, and PII.

The Architecture of Automated Intelligence

The Robin tool changes this by treating the investigation as a structured data pipeline rather than a series of manual tasks. It uses Large Language Models (LLMs) to bridge the gap between a human-readable query and the messy, unindexed reality of the dark web.

When you initiate a search, the tool does not simply pass your string to a search engine. It first sends your query to an LLM for refinement. If you search for "ransomware," the LLM expands that into a set of targeted queries aimed at the most relevant dark web forums and marketplaces. This step is critical because it cuts down on irrelevant results before the crawler even touches a site.
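The refinement step can be sketched as a small function that wraps an LLM call. This is a minimal illustration, not Robin's actual implementation: the prompt wording, the `refine_query` name, and the `llm` callable (any function that takes a prompt string and returns the model's text) are all assumptions made for the example.

```python
import re
from typing import Callable, List

# Hypothetical prompt; the wording Robin actually uses is not given in the talk.
REFINE_PROMPT = (
    "You are assisting a dark web OSINT investigation.\n"
    "Rewrite the analyst's query as up to {n} short search-engine queries, "
    "one per line, with no numbering or commentary.\n"
    "Analyst query: {query}"
)

# Matches a leading bullet ("-", "*") or numbering ("1.", "2)") on a line.
_LIST_PREFIX = re.compile(r"^\s*(?:[-*]|\d+[.)])\s*")


def refine_query(query: str, llm: Callable[[str], str], n: int = 3) -> List[str]:
    """Ask the LLM for refined queries and normalise its free-text reply."""
    reply = llm(REFINE_PROMPT.format(n=n, query=query))
    refined: List[str] = []
    seen = set()
    for line in reply.splitlines():
        cleaned = _LIST_PREFIX.sub("", line).strip()
        # Skip blank lines and case-insensitive duplicates.
        if cleaned and cleaned.lower() not in seen:
            seen.add(cleaned.lower())
            refined.append(cleaned)
    # Fall back to the original query if the model returned nothing usable.
    return refined[:n] or [query]
```

Passing the model in as a plain callable keeps the sketch independent of any particular provider, so the same function works with a hosted API or a local model.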

Once the search results return, the tool performs a reachability check. A significant portion of onion links are dead or transient. By automating the verification process, you avoid wasting cycles on infrastructure that no longer exists.

The final stage is the scraping and analysis. Instead of dumping raw HTML into a folder, the tool uses an LLM to extract specific entities. It looks for:

- Crypto wallet addresses
- Threat actor handles
- Email addresses and PII
- Mentions of specific vulnerabilities or target organizations

This structured output is then formatted into a markdown report, providing you with a clean, actionable summary of the findings.
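The back half of the pipeline (reachability check, entity extraction, markdown report) can be sketched as follows. This is an illustration under stated assumptions, not Robin's code: it uses regular expressions as a deterministic stand-in for the LLM extraction step, the patterns are deliberately loose, and every function name here is hypothetical. The `probe` callable is injected so the sketch does not depend on a live Tor connection.

```python
import re
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable, List

# Illustrative patterns only; real investigations need stricter validation.
PATTERNS = {
    "btc_address": re.compile(
        r"\b(?:bc1[a-z0-9]{25,59}|[13][a-km-zA-HJ-NP-Z1-9]{25,34})\b"
    ),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "cve": re.compile(r"\bCVE-\d{4}-\d{4,7}\b"),
}


def filter_reachable(
    urls: Iterable[str], probe: Callable[[str], bool], workers: int = 8
) -> List[str]:
    """Probe URLs concurrently and keep the ones that respond, in input order."""

    def safe_probe(url: str) -> bool:
        # Treat any exception (timeout, connection error) as "dead".
        try:
            return bool(probe(url))
        except Exception:
            return False

    url_list = list(urls)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        alive = list(pool.map(safe_probe, url_list))
    return [u for u, ok in zip(url_list, alive) if ok]


def extract_artifacts(text: str) -> Dict[str, List[str]]:
    """Return de-duplicated matches per category, in first-seen order."""
    return {
        name: list(dict.fromkeys(m.group(0) for m in pattern.finditer(text)))
        for name, pattern in PATTERNS.items()
    }


def render_report(query: str, pages: Dict[str, str]) -> str:
    """Build a markdown report from a mapping of source URL to scraped text."""
    lines = [f"# Investigation report: {query}", "", "## Sources", ""]
    lines += [f"- {url}" for url in pages]
    lines += ["", "## Artifacts", ""]
    merged = extract_artifacts("\n".join(pages.values()))
    for name, values in merged.items():
        lines.append(f"### {name}")
        lines += [f"- {v}" for v in values] or ["- none found"]
        lines.append("")
    return "\n".join(lines)
```

In practice, `probe` would issue an HTTP request through a local Tor SOCKS proxy with a short timeout. Regexes catch well-formed addresses and identifiers cheaply, but they cannot recognise threat actor handles or target organizations, which is where the LLM-based extraction described above earns its place.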

Technical Implementation and Prompt Engineering

The power of this tool lies in its prompt flow. Because the model is not inherently trained on dark web threat intelligence, you have to guide it. The tool uses a multi-stage prompt approach to ensure the output remains useful.

For example, the "Generate Summary" prompt is designed to force the model to categorize artifacts. If you are running this on a local machine, you can use Ollama to handle the inference, keeping your sensitive research off third-party APIs. The command structure is straightforward:

```
robin -q "Black Basta ransomware" -t 10
```

This command triggers the pipeline. The talk describes the -t flag as limiting the number of threads or results, which keeps the analysis focused; check the tool's help output for the exact semantics in your version. The tool then outputs a report that includes the original query, the source links, and a categorized list of investigation artifacts.
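The "Generate Summary" stage with local inference could look roughly like the sketch below. It assumes an Ollama server on its default local port and uses Ollama's `/api/generate` endpoint with streaming disabled. The prompt text, the function names, and the `llama3.1` model name are assumptions for illustration; the talk does not publish Robin's actual prompts.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

# Hypothetical prompt that forces the model to categorize artifacts.
SUMMARY_PROMPT = """You are a threat intelligence analyst.
Using only the scraped text below, list the investigation artifacts under these headings:
Crypto addresses, Threat actor handles, Emails and PII, Vulnerabilities and targets.
Write "none found" under a heading with no artifacts. Do not invent values.

Query: {query}

Scraped text:
{content}
"""


def build_request(query: str, content: str, model: str = "llama3.1") -> urllib.request.Request:
    """Build the HTTP request for a single non-streaming generation."""
    payload = {
        "model": model,  # assumed model name; use whichever model you have pulled
        "prompt": SUMMARY_PROMPT.format(query=query, content=content),
        "stream": False,  # ask for one JSON object instead of a token stream
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate_summary(query: str, content: str, model: str = "llama3.1") -> str:
    """Send the prompt to the local Ollama server and return the model's text."""
    request = build_request(query, content, model)
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]
```

Fixing the headings in the prompt and telling the model to write "none found" makes the output easier to parse and discourages it from inventing artifacts to fill a category.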

Real-World Application for Pentesters

During a red team engagement or a targeted threat hunting exercise, time is your most limited resource. If you are tasked with identifying the infrastructure used by a specific threat actor, you can use this tool to automate the initial discovery phase. By providing a list of known actor aliases or specific malware strings, you can quickly map out the forums where these actors are active.

The impact of this is immediate. Instead of spending your first day on an engagement manually browsing forums, you can have a pipeline running in the background that aggregates and parses the latest posts. When you sit down to work, you are presented with a list of potential leads, crypto addresses, and infrastructure indicators that you can then verify.

Defensive Considerations

From a defensive perspective, this level of automation is a double-edged sword. While it helps security teams monitor for threats, it also lowers the barrier for adversaries to conduct reconnaissance against your organization. If you are a defender, you should be aware that your public-facing infrastructure is being indexed by increasingly sophisticated, automated tools.

Monitoring for unusual traffic patterns originating from known Tor exit nodes is standard practice, but it is rarely enough. You need to focus on your own digital footprint. If your internal assets or employee credentials appear in these automated reports, you need a process to identify the source of the leak. OWASP provides excellent guidance on threat modeling that can help you understand how these automated reconnaissance techniques might be used to target your specific environment.
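As a starting point for the Tor exit node monitoring mentioned above, the sketch below flags request sources that appear on an exit list. It assumes you have already downloaded a one-IP-per-line list (the Tor Project publishes a bulk exit list in that shape) and extracted source IPs from your own logs; both function names are hypothetical.

```python
import ipaddress
from collections import Counter
from typing import Iterable, Set


def parse_exit_list(text: str) -> Set[str]:
    """Parse a one-IP-per-line exit list, skipping blanks, comments, and junk."""
    exits = set()
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        try:
            # Normalise through ipaddress so equivalent spellings compare equal.
            exits.add(str(ipaddress.ip_address(line)))
        except ValueError:
            continue
    return exits


def tor_hits(source_ips: Iterable[str], exits: Set[str]) -> Counter:
    """Count requests per source IP that appears in the exit list."""
    return Counter(ip for ip in source_ips if ip in exits)
```

A per-IP count is only a first signal. Exit nodes rotate, so the list needs regular refreshing, and a match says nothing about intent on its own.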

The era of manual, artisanal dark web research is ending. If you are still spending your time copy-pasting links into a spreadsheet, you are working too hard. Start building pipelines that do the heavy lifting for you. The goal is not to replace the researcher, but to give the researcher the time to focus on the analysis that actually matters.

Tools Used

Target Technologies

Up Next From This Conference

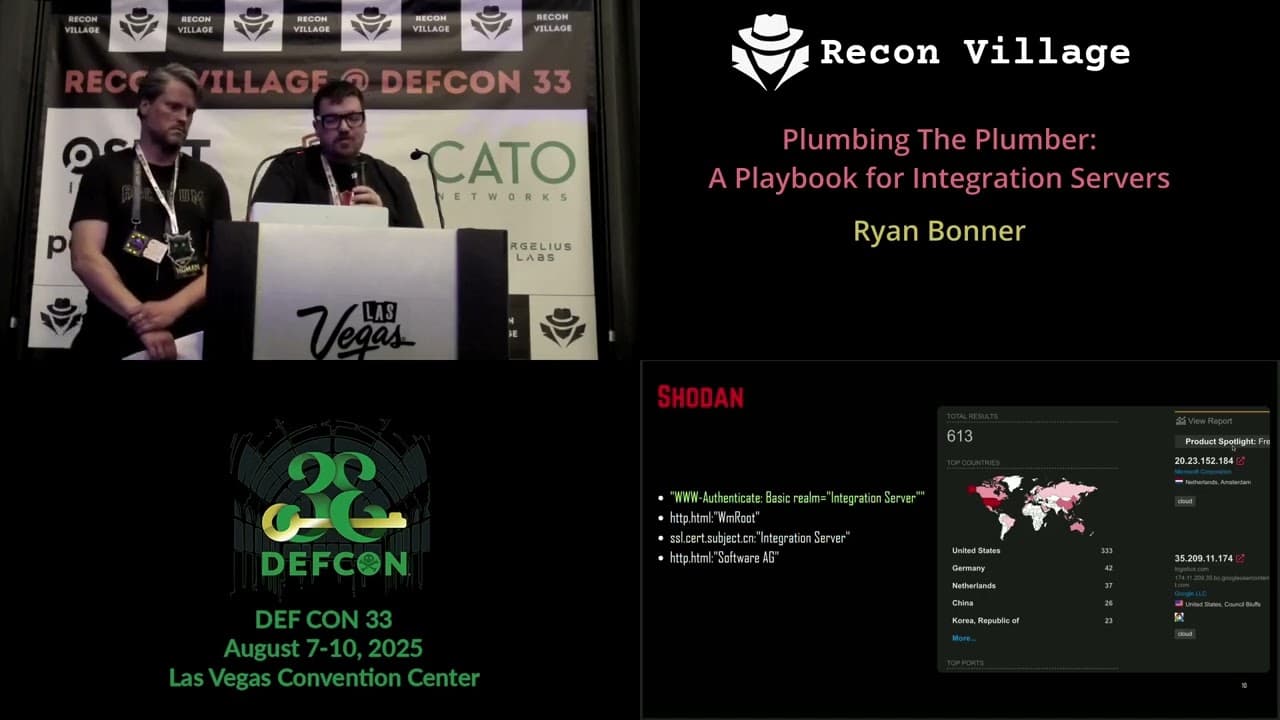

Plumbing The Plumber: A Playbook For Integration Servers

Mapping the Shadow War: From Estonia to Ukraine

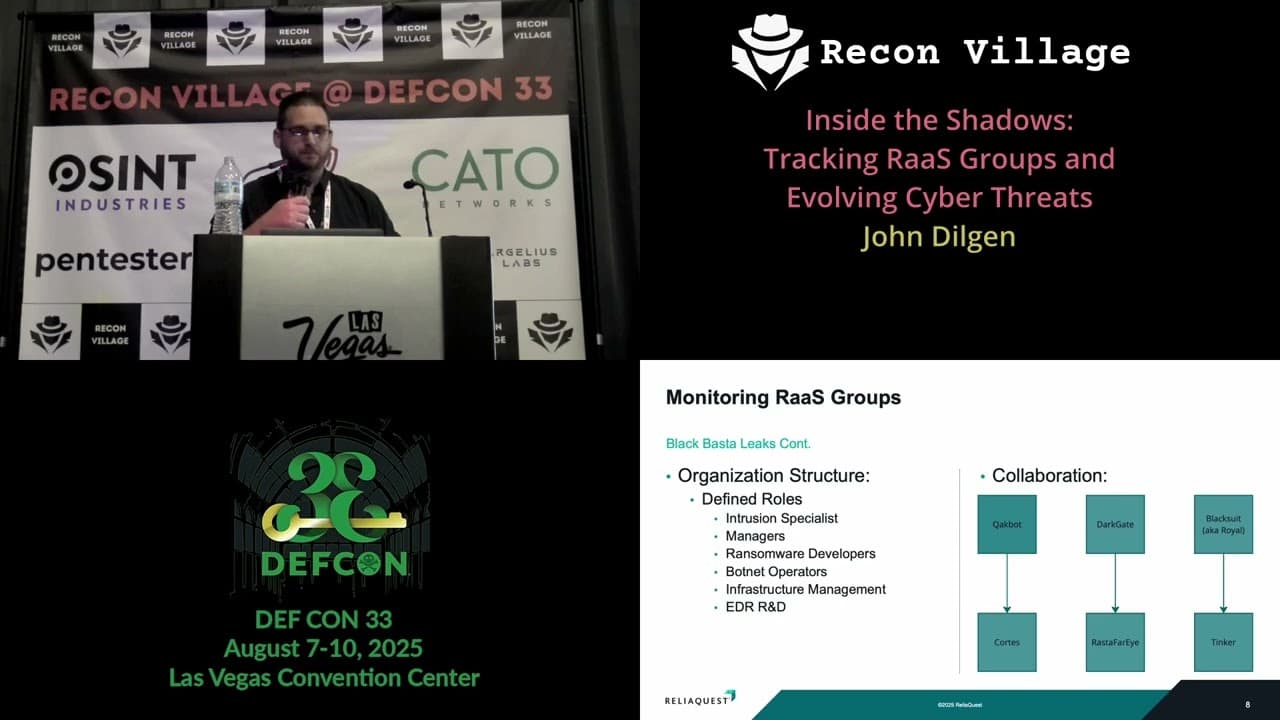

Inside the Shadows: Tracking RaaS Groups and Evolving Cyber Threats

Similar Talks

Inside the FBI's Secret Encrypted Phone Company 'Anom'

Kill List: Hacking an Assassination Site on the Dark Web