DEF CON 33 Recon Village - Autonomous Video Hunter AI Agents for Real Time OSINT - Kevin Dela Rosa

Description

Kevin Dela Rosa introduces the Autonomous Video Hunter, an AI agent system designed to automate OSINT by extracting structured intelligence from video content. The presentation demonstrates how multimodal AI can perform real-time facial recognition, logo matching, and contextual analysis across large video datasets.

The Future of OSINT: Building Autonomous Video Hunter AI Agents

In the modern digital landscape, the volume of video data generated daily is staggering. From TikTok clips and Reddit uploads to satellite imagery and public-facing cameras indexed on Shodan, the internet is awash in visual information. For Open Source Intelligence (OSINT) researchers, this represents a goldmine of data, yet it remains largely untapped due to the sheer manual effort required to analyze it. At DEF CON 33 Recon Village, Kevin Dela Rosa introduced a groundbreaking solution: the Autonomous Video Hunter. This AI agent system leverages multimodal models to automate the extraction of structured intelligence from raw video streams, transforming how we conduct surveillance, threat intelligence, and brand monitoring.

The Problem: Intelligence Trapped in Video

Traditional OSINT tools are excellent at indexing text and metadata, but they often struggle with the 'unstructured' nature of video. If you need to find a specific individual across hundreds of hours of social media footage or track the movement of a specific vehicle through a city, you are usually stuck watching the footage manually. This process is not only slow but prone to human error. Dela Rosa's research focuses on 'agentic systems'—AI that doesn't just process data but creates plans, executes tools, and synthesizes results autonomously. By giving AI the ability to 'see' and 'reason,' we can finally unlock the data trapped within these visual formats at scale.

Technical Deep Dive: The Agentic Architecture

Understanding the Vulnerability of Obscurity

The 'vulnerability' here isn't a software bug like a buffer overflow; it's the vulnerability of public data that was previously protected by the high cost of manual analysis. Autonomous Video Hunter removes that barrier. The system architecture is divided into four modular components:

- The Video Listener: This is the entry point. Using no-code tools like Gumloop, researchers can scrape platforms like TikTok for specific hashtags or geo-tagged content.

- The Video Processor: This stage involves indexing the video. It uses Vision Language Models (VLMs) to generate a dense, frame-by-frame description of the video’s content, transcribes speech, and extracts on-screen text.

- The Context Store: This is a searchable database where the indexed descriptions are stored, allowing the agent to perform rapid queries without re-processing the raw video files every time.

- The Video Analysis Agent: Built on the LangGraph framework, this agent acts as the brain. It receives a natural language query (e.g., "Find where this person appears and tell me what they are doing"), creates a plan, and selects the right tools to execute that plan.
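The split between the Context Store and the Video Analysis Agent can be sketched in a few lines. This is a minimal illustration, not the talk's implementation: the `ContextStore` class, its table schema, the `search_descriptions` tool, and the hand-written plan are all hypothetical names chosen for the example. The real agent is built on LangGraph and lets a language model produce the plan, whereas this sketch uses plain Python and takes the plan as input.

```python
import sqlite3
from typing import Callable


class ContextStore:
    """Searchable index of per-segment video descriptions (hypothetical schema)."""

    def __init__(self) -> None:
        self.db = sqlite3.connect(":memory:")
        self.db.execute(
            "CREATE TABLE segments "
            "(video_id TEXT, start_s REAL, end_s REAL, description TEXT)"
        )

    def add(self, video_id: str, start_s: float, end_s: float, description: str) -> None:
        # In the described pipeline the Video Processor would write these rows.
        self.db.execute(
            "INSERT INTO segments VALUES (?, ?, ?, ?)",
            (video_id, start_s, end_s, description),
        )

    def search(self, keyword: str) -> list[tuple[str, float, float, str]]:
        # Case-insensitive substring match on the stored descriptions.
        # A production store would more likely use full-text or embedding search.
        rows = self.db.execute(
            "SELECT video_id, start_s, end_s, description FROM segments "
            "WHERE description LIKE ? ORDER BY video_id, start_s",
            (f"%{keyword}%",),
        )
        return rows.fetchall()


def search_descriptions(store: ContextStore, argument: str) -> list:
    """Example tool: look up indexed segments without touching raw video."""
    return store.search(argument)


# Tool registry the agent selects from (only one hypothetical tool here).
TOOLS: dict[str, Callable[[ContextStore, str], list]] = {
    "search_descriptions": search_descriptions,
}


def run_plan(store: ContextStore, plan: list[tuple[str, str]]) -> str:
    """Execute each (tool_name, argument) step and build a markdown report."""
    lines = []
    for tool_name, argument in plan:
        hits = TOOLS[tool_name](store, argument)
        lines.append(f"## {tool_name}({argument!r}): {len(hits)} hit(s)")
        for video_id, start_s, end_s, description in hits:
            lines.append(f"- {video_id} [{start_s:.0f}s-{end_s:.0f}s]: {description}")
    return "\n".join(lines)


if __name__ == "__main__":
    store = ContextStore()
    store.add("clip_a", 0, 5, "A man in a red jacket walks past a cafe")
    store.add("clip_a", 5, 10, "Close-up of a coffee cup with a green logo")
    print(run_plan(store, [("search_descriptions", "logo")]))
```

Because the agent queries the stored descriptions, the expensive VLM pass runs once per video at indexing time rather than once per question.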

Step-by-Step Implementation and Tooling

To achieve high accuracy in specialized tasks, the agent calls upon several low-level computer vision libraries:

- Facial Recognition: The system uses DeepFace, specifically the RetinaFace model for high-precision face detection and VGG-Face for verifying identities against a reference image. This allows the agent to find specific targets in a crowd or across disparate video clips.

- Logo and Image Matching: For finding static objects like brand logos or building facades, the system utilizes OpenCV. It employs SIFT (Scale-Invariant Feature Transform) to create 128-dimensional vectors describing key points in an image. These are then matched using the RANSAC algorithm, which filters out noise to confirm if the match is legitimate.

- Zero-Shot Detection: When a researcher needs to find an arbitrary object (like a "red backpack" or a "specific type of drone") that hasn't been specifically trained for, the system uses OWL-ViT v2 from Hugging Face. This allows for 'open-vocabulary' detection based on simple text prompts.
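To make the RANSAC step concrete, the sketch below shows how random sampling separates consistent keypoint matches from noise. It is a deliberately simplified illustration using only the standard library: it assumes the keypoint pairs have already been produced by matching SIFT descriptors, and it fits a translation-only model, whereas OpenCV's `cv2.findHomography` with the `cv2.RANSAC` flag estimates a full homography. The function names and the thresholds (`tolerance`, `min_inliers`, `min_ratio`) are assumptions chosen for the example, not values from the talk.

```python
import random

Point = tuple[float, float]
Match = tuple[Point, Point]  # (keypoint in reference image, keypoint in video frame)


def ransac_translation(
    matches: list[Match],
    tolerance: float = 3.0,
    iterations: int = 200,
    seed: int = 0,
) -> tuple[Point, list[Match]]:
    """Return (best_shift, inliers) for a translation-only model.

    Each iteration samples one match, treats its offset as the hypothesis,
    and counts how many other matches agree within `tolerance` pixels.
    """
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    best_shift: Point = (0.0, 0.0)
    best_inliers: list[Match] = []
    if not matches:
        return best_shift, best_inliers
    for _ in range(iterations):
        (rx, ry), (fx, fy) = rng.choice(matches)
        dx, dy = fx - rx, fy - ry
        inliers = [
            m for m in matches
            if abs((m[1][0] - m[0][0]) - dx) <= tolerance
            and abs((m[1][1] - m[0][1]) - dy) <= tolerance
        ]
        if len(inliers) > len(best_inliers):
            best_shift, best_inliers = (dx, dy), inliers
    return best_shift, best_inliers


def is_legitimate_match(
    matches: list[Match], min_inliers: int = 10, min_ratio: float = 0.5
) -> bool:
    """Accept a logo match only if enough keypoints agree on one geometry."""
    if not matches:
        return False
    _, inliers = ransac_translation(matches)
    return len(inliers) >= min_inliers and len(inliers) / len(matches) >= min_ratio
```

Spurious descriptor matches tend to disagree with one another about where the logo sits in the frame, so they rarely form a large inlier set. A true appearance of the logo produces many matches that share one geometric transform, which is what the inlier count measures.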

Real-Time Intelligence with MCP

One of the most innovative aspects of the presentation was the integration of the Model Context Protocol (MCP). By connecting the Video Hunter to Claude Desktop via MCP, Dela Rosa demonstrated real-time screen monitoring. This setup can 'watch' a researcher’s screen—for instance, a busy Discord channel or a live news feed—and provide instant summaries, sentiment analysis, and even knowledge graph visualizations of the data as it flows. This turns the AI from a forensic tool into a real-time intelligence assistant.

Mitigation & Defense for the New Era

As these tools become more accessible, the security landscape must adapt. For individuals and organizations, the 'security through obscurity' provided by the volume of internet data is disappearing.

- Operational Security (OPSEC): High-value targets must be aware that any video appearance—even in the background of a stranger's TikTok—can now be indexed and searched.

- Privacy Safeguards: Developers of these tools must implement ethical guardrails to prevent stalking or unauthorized surveillance, though the open-source nature of many underlying models makes this a challenge.

- Detection of AI Monitoring: Organizations may need to develop methods to detect when their public-facing video streams are being systematically scraped and analyzed by automated agents.

Conclusion & Key Takeaways

The Autonomous Video Hunter represents a paradigm shift in OSINT. By moving from manual scrubbing to agentic orchestration, researchers can process volumes of data that were previously impossible to manage. The key takeaway for security professionals is that the barrier to entry for high-level visual intelligence has been significantly lowered. Whether you are a bug hunter looking for leaked credentials in a developer's stream or a threat intelligence analyst tracking physical assets, the ability to build and deploy these autonomous agents will be a defining skill in the next generation of cybersecurity. The code is available on GitHub, and the tools are mostly CPU-friendly, making this the perfect time to start experimenting with agentic OSINT.

AI Summary

Kevin Dela Rosa, founder of Cloud Blue and an expert in multimodal AI, presents his research on Autonomous Video Hunter at DEF CON 33 Recon Village. The core problem addressed is that vast amounts of actionable intelligence are 'trapped' in video formats across social media, satellite feeds, and public cameras. Manually analyzing these videos is labor-intensive and unscalable for OSINT researchers. Dela Rosa proposes an agentic AI system that can 'see' and 'reason' about video content in real-time.

The system's architecture consists of four primary stages: a Video Listener, a Video Processor, a Context Store, and a Video Analysis Agent. The 'Listener' acts as a scraper, gathering videos from platforms like TikTok or Reddit based on specific hashtags or locations. The 'Processor' indexes the video, using Vision Language Models (VLMs) to create dense descriptions of scenes, speech transcriptions, and text overlays. The 'Context Store' makes this data searchable. Finally, the 'Video Analysis Agent', built using the LangGraph framework, orchestrates various specialized tools to answer user queries, such as 'Find every appearance of a specific celebrity' or 'Identify all locations where a specific logo appears.'

Dela Rosa showcases several live demos. One involves identifying celebrity Will Smith in a dataset of 50 TikTok videos from Zurich. The agent successfully filters the videos, identifies the target using facial recognition, and synthesizes a markdown report detailing timestamps, emotional states, and the narrative context of the appearance. Another demo focuses on logo detection, using the Starbucks logo to find building facades and brand interactions across a video corpus. The agent provides an aggregate summary of how the logo was used, such as in promotional material or by employees.

Technically, the system leverages a variety of specialized models. For facial recognition, it uses the DeepFace library with RetinaFace for detection and VGG-Face for verification. For logo and building facade matching, it utilizes OpenCV's SIFT (Scale-Invariant Feature Transform) descriptors combined with the RANSAC algorithm to ensure robust image matching. For 'zero-shot' object detection (finding arbitrary items without specific training), it uses the OWL-ViT v2 model. Notably, the speaker emphasizes that the proof-of-concept is designed to run on a standard laptop CPU to remain accessible for educational purposes.

The talk concludes with a demonstration of the Model Context Protocol (MCP), connecting the Video Hunter to Claude Desktop. This allows for real-time monitoring of screen activity, such as Discord chats, and generating live visualizations or knowledge graphs of the ongoing discussion. Dela Rosa highlights that this technology can be applied to monitoring protests, tracking public figures, or government intelligence applications, fundamentally changing how OSINT is conducted in the age of generative AI.

More from this Playlist

DEF CON 33 Recon Village - Mapping the Shadow War From Estonia to Ukraine - Evgueni Erchov

DEF CON 33 Recon Village - How to Become One of Them: Deep Cover Ops - Sean Jones, Kaloyan Ivanov

DEF CON 33 Recon Village - Building Local Knowledge Graphs for OSINT - Donald Pellegrino

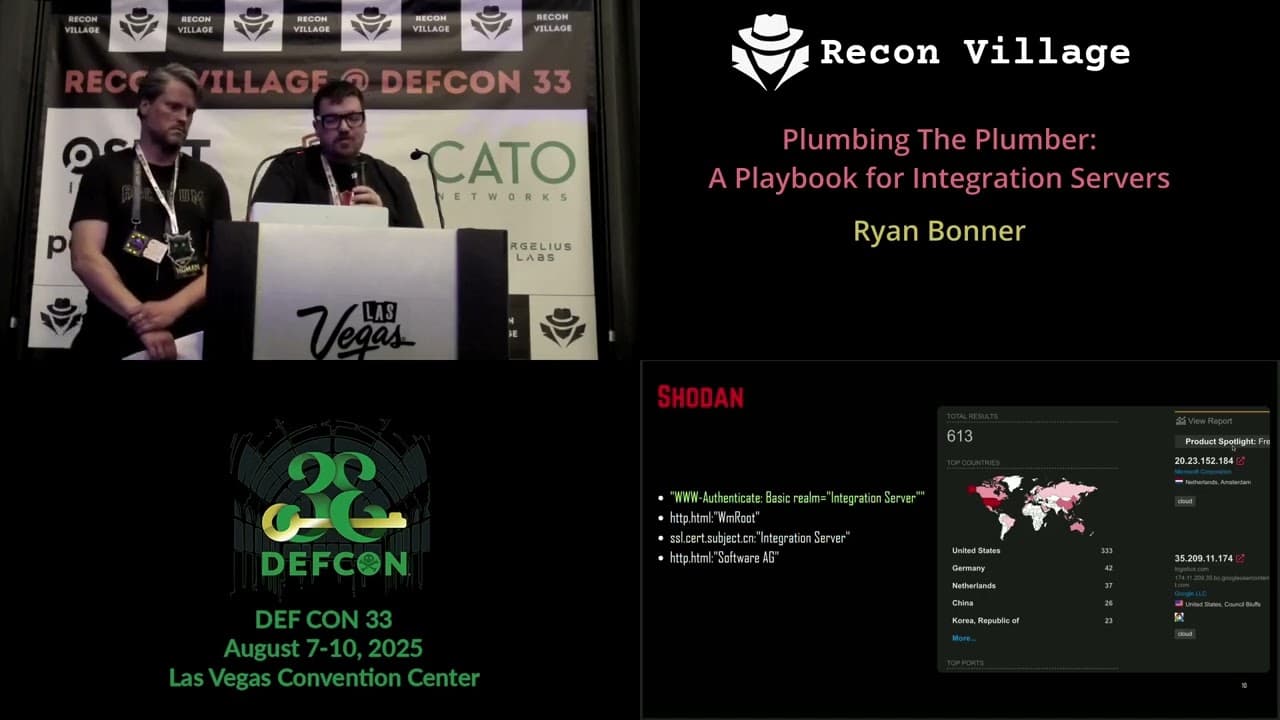

DEF CON 33 Recon Village - A Playbook for Integration Servers - Ryan Bonner, Guðmundur Karlsson