DEF CON 33 Recon Village - Building Local Knowledge Graphs for OSINT - Donald Pellegrino

Description

Dr. Donald Pellegrino presents a methodology for building local OSINT knowledge graphs using RDF and local LLMs to bypass external rate limits and protect operational security. The talk demonstrates how to automate the extraction of structured data from unstructured web sources while maintaining a private, queryable repository.

Mastering OSINT: Building Local Knowledge Graphs to Bypass Rate Limits and Shield OPSEC

Introduction

In the fast-paced world of Open Source Intelligence (OSINT), the tools we rely on are often double-edged swords. Every query sent to a search engine and every prompt entered into a cloud-based LLM is a signal of interest that can be logged, analyzed, and potentially used against the investigator. Furthermore, the aggressive rate-limiting of modern web platforms often throttles deep research. Dr. Donald Pellegrino's DEF CON 33 Recon Village talk, 'Building Local Knowledge Graphs for OSINT,' provides a sophisticated blueprint for overcoming these hurdles. By shifting from external search to local graph-based analysis, investigators can reclaim their privacy and scale their efforts beyond the constraints of commercial APIs. This post explores the technical components of his methodology, from Rust-based scraping to local LLM integration.

Background & Context

The traditional OSINT workflow relies heavily on live queries. This is problematic for two reasons: integrity and confidentiality. When you query a target on a specific platform, that platform (and its advertisers) knows exactly what you are looking for. This 'indicator of interest' is a major OPSEC leak. Additionally, 'search' is not 'analysis.' Simply finding data is only the first step; the real value lies in understanding the relationships between entities across multiple sources. Knowledge graphs, specifically those built on the Resource Description Framework (RDF), offer a solution. RDF allows us to represent data as 'triples' (Subject-Predicate-Object), creating a flexible web of information that is far more powerful than traditional relational databases for investigative work.

Technical Deep Dive: Understanding the Technique

The core of Pellegrino's approach is the creation of a local 'triple store', a database designed for graph data.
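To make the triple idea concrete, here is a minimal sketch of an in-memory triple store in plain Python. It is not the presenter's implementation, and a real deployment would use a proper RDF database. The `TripleStore` class, the `http://example.org/` namespace, and the sample people and organizations are all hypothetical. Only the FOAF and `rdf:type` identifiers are real vocabulary terms.

```python
from typing import Iterator, Optional, Tuple

Triple = Tuple[str, str, str]

# Real vocabulary identifiers.
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
FOAF = "http://xmlns.com/foaf/0.1/"
# Hypothetical namespace for the made-up entities in this sketch.
EX = "http://example.org/"


class TripleStore:
    """Toy triple store: a set of (subject, predicate, object) tuples."""

    def __init__(self) -> None:
        self._triples = set()

    def add(self, s: str, p: str, o: str) -> None:
        # A set makes repeated inserts of the same fact harmless.
        self._triples.add((s, p, o))

    def match(
        self,
        s: Optional[str] = None,
        p: Optional[str] = None,
        o: Optional[str] = None,
    ) -> Iterator[Triple]:
        """Yield triples matching the pattern; None acts as a wildcard."""
        for triple in sorted(self._triples):
            if all(want is None or want == got
                   for want, got in zip((s, p, o), triple)):
                yield triple


store = TripleStore()
store.add(EX + "alice", RDF_TYPE, FOAF + "Person")
store.add(EX + "alice", FOAF + "name", "Alice Example")
store.add(EX + "acme", RDF_TYPE, FOAF + "Organization")
# foaf:member points from a group to one of its members.
store.add(EX + "acme", FOAF + "member", EX + "alice")
store.add(EX + "acme", FOAF + "member", EX + "alice")  # duplicate, ignored

people = [s for s, _, _ in store.match(p=RDF_TYPE, o=FOAF + "Person")]
orgs_of_alice = [s for s, _, _ in store.match(p=FOAF + "member", o=EX + "alice")]
```

Because every fact has the same three-part shape, data from unrelated sources can be added to the same store without a schema migration, which is the property the rest of the workflow relies on.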
By standardizing all collected information into RDF, an investigator can join data from a speaker list, a corporate registry, and a social media scrape into a single, unified view. This is achieved through 'ontologies,' which are essentially schemas that define how entities (like people and organizations) relate to one another. Using a standard ontology like FOAF (Friend of a Friend) means that data from different analysts can be merged without manual reconfiguration.

Step-by-Step Implementation

1. Advanced Scraping with Rust and Tor: Traditional scraping tools often fail on modern sites that use heavy JavaScript. Pellegrino recommends building custom collectors in Rust. Specifically, he highlights the Arti library, which allows Rust programs to communicate directly over the Tor network. This keeps the collection process itself anonymous. Tools like Playwright and Chromium are used to render dynamic content, so that data in hidden iframes or lazy-loaded tabs is not left behind.

2. Local Information Extraction: Once the raw HTML is collected, the next challenge is extracting salient entities. This is where local LLMs come in. By running models like Qwen 30B locally using vLLM or llama.cpp, investigators can process their data without sending it to OpenAI or Anthropic. These models can be prompted to 'extract all persons and organizations mentioned in this text and format them as RDF triples aligned with the FOAF ontology.'

3. Querying with SPARQL: With the data structured in a triple store, the investigator uses SPARQL (the SQL equivalent for graphs) to perform complex link analysis. For example, a single SPARQL query can identify all speakers at a conference whose parent companies were founded before 1950, even if that data came from two entirely different sources.

Tools and Techniques

The project utilizes a 'Graph RAG' (Retrieval-Augmented Generation) approach.
Unlike standard RAG, which performs a simple text search to find context for an LLM, Graph RAG uses the structure of the knowledge graph to provide more relevant, related entities to the model's context window. This allows for far more accurate and nuanced automated analysis.

Mitigation & Defense

For defenders, understanding this methodology is crucial. It highlights that 'silent' scraping is becoming increasingly sophisticated. To detect such activities, organizations should look for anomalies in traffic patterns that suggest automated rendering (like Playwright signatures) even when coming through anonymization networks like Tor. For investigators, the best defense is a good offense: by maintaining a local, encrypted repository of intelligence, they minimize their interaction with the 'live' web, thereby reducing their digital footprint.

Conclusion & Key Takeaways

The transition from manual searching to local knowledge graph construction is a game-changer for high-stakes OSINT. By leveraging Rust for collection, RDF for structure, and local LLMs for analysis, investigators can build a powerful, private intelligence engine. The key takeaway is repeatability: every piece of data you structure today becomes a building block for your analysis tomorrow. For those looking to implement this, the presenter has provided a public GitHub repository to get started with the Rust-based collection and RDF enrichment pipeline. Practice safely, and always consider the ethical implications of your data collection.
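The Graph RAG idea described under Tools and Techniques can be sketched in a few lines of plain Python. This is an illustrative assumption about how such retrieval might work, not the presenter's pipeline. The toy graph, the `ex:` identifiers, the `ex:foundedYear` predicate, and the helper names are all hypothetical. No model is called; the sketch only shows how graph structure, rather than text similarity, selects what goes into the prompt.

```python
# Hypothetical toy graph; literals are plain strings without a prefix.
TRIPLES = [
    ("ex:alice", "foaf:name", "Alice Example"),
    ("ex:acme", "foaf:member", "ex:alice"),
    ("ex:acme", "ex:foundedYear", "1947"),
    ("ex:bob", "foaf:name", "Bob Example"),
    ("ex:globex", "foaf:member", "ex:bob"),
]


def seed_entities(question, triples):
    """Entities whose foaf:name literal appears verbatim in the question."""
    q = question.lower()
    return {s for s, p, o in triples if p == "foaf:name" and o.lower() in q}


def neighborhood(seeds, triples, hops=2):
    """Collect triples within `hops` edges of the seeds (edges undirected)."""
    # Only nodes that appear as a subject count as entities, so two
    # unrelated entities are never linked through a shared literal.
    entities = {s for s, _, _ in triples}
    seen = set(seeds)
    frontier = set(seeds)
    selected = []
    for _ in range(hops):
        next_frontier = set()
        for triple in triples:
            s, _, o = triple
            if s in frontier or o in frontier:
                if triple not in selected:
                    selected.append(triple)
                for node in (s, o):
                    if node in entities and node not in seen:
                        seen.add(node)
                        next_frontier.add(node)
        frontier = next_frontier
    return selected


def build_prompt(question, triples, hops=2):
    """Serialize the retrieved subgraph as context lines ahead of the question."""
    context = neighborhood(seed_entities(question, triples), triples, hops)
    facts = "\n".join(f"{s} {p} {o} ." for s, p, o in context)
    return f"Known facts:\n{facts}\n\nQuestion: {question}"


question = "Which company is Alice Example affiliated with?"
context = neighborhood(seed_entities(question, TRIPLES), TRIPLES)
prompt = build_prompt(question, TRIPLES)
```

The resulting prompt would then be passed to whatever local model is in use. A text-only search for "Alice Example" would find her name but not the founding year of her organization; following edges in the graph brings in that second-hop fact.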

AI Summary

Dr. Donald Pellegrino's presentation at DEF CON 33 Recon Village addresses the critical challenges faced by modern OSINT investigators, specifically the limitations imposed by web service rate limits and the operational security (OPSEC) risks inherent in querying external search engines and cloud-based Large Language Models (LLMs). The central thesis is that investigators can achieve better results and higher security by building local knowledge graphs. The methodology utilizes the Resource Description Framework (RDF) as a canonical data format, allowing analysts to transform disparate data sources into a unified, queryable mathematical graph. Dr. Pellegrino explains that while traditional tools like wget and curl are often insufficient for modern, JavaScript-heavy websites, custom tools built in Rust, specifically using the Arti library (the Rust implementation of Tor), can provide more robust and private data collection. The workflow begins with bulk collection, where data is gathered into a local cache. This approach not only bypasses rate limits but also protects the investigator's intent by burying specific targets within a larger dataset. Once collected, the data is enriched using local LLMs (such as Qwen 30B) running on frameworks like vLLM or llama.cpp. These models are used to identify salient points of interest and map them to standard ontologies like FOAF (Friend of a Friend). This process transforms unstructured HTML or text into structured 'triples' that can be stored in a triple store database. The presenter demonstrates a case study where he analyzed the Recon Village speaker list. By using an LLM to parse the site's dynamic content, he identified speakers that manual analysis had missed. He then cross-referenced this list with Wikipedia data via Wikidata to determine the founding dates and characteristics of the speakers' affiliated companies. This join was made seamless by the use of RDF and SPARQL, the standard query language for graph data.
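The cross-source join in the case study can be illustrated with a small pattern matcher in plain Python. This is a sketch of how a SPARQL basic graph pattern behaves, not the presenter's query or a SPARQL engine. The speaker and company data, the `ex:` identifiers, and the `ex:affiliation` and `ex:foundedYear` predicates are hypothetical stand-ins for whatever the real sources provide.

```python
# Source 1 (hypothetical): triples extracted from a speaker list.
SPEAKERS = [
    ("ex:alice", "foaf:name", "Alice Example"),
    ("ex:alice", "ex:affiliation", "ex:acme"),
    ("ex:bob", "foaf:name", "Bob Example"),
    ("ex:bob", "ex:affiliation", "ex:globex"),
]
# Source 2 (hypothetical): reference data about organizations.
REFERENCE = [
    ("ex:acme", "ex:foundedYear", 1947),
    ("ex:globex", "ex:foundedYear", 1989),
]


def is_var(term):
    """Pattern terms starting with '?' are variables, as in SPARQL."""
    return term.startswith("?")


def match_pattern(pattern, triple, binding):
    """Extend `binding` so `pattern` equals `triple`, or return None."""
    new = dict(binding)
    for term, value in zip(pattern, triple):
        if is_var(term):
            # A variable must keep the value it was first bound to.
            if new.setdefault(term, value) != value:
                return None
        elif term != value:
            return None
    return new


def query(patterns, triples):
    """Evaluate patterns left to right, joining on shared variables."""
    bindings = [{}]
    for pattern in patterns:
        bindings = [
            extended
            for binding in bindings
            for triple in triples
            if (extended := match_pattern(pattern, triple, binding)) is not None
        ]
    return bindings


# Roughly the shape of this SPARQL query:
#   SELECT ?name WHERE {
#     ?person ex:affiliation ?org .
#     ?org    ex:foundedYear ?year .
#     ?person foaf:name      ?name .
#     FILTER(?year < 1950)
#   }
graph = SPEAKERS + REFERENCE  # merging sources is just concatenation
patterns = [
    ("?person", "ex:affiliation", "?org"),
    ("?org", "ex:foundedYear", "?year"),
    ("?person", "foaf:name", "?name"),
]
matches = [b for b in query(patterns, graph) if b["?year"] < 1950]
names = sorted(b["?name"] for b in matches)
```

The join happens through the shared `?org` variable: neither source alone links a speaker to a founding year, but once both are expressed as triples with the same identifiers, the combined graph answers the question directly.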
The talk concludes by emphasizing the 'virtuous cycle' of this approach: as more data is collected and structured locally, the investigator's internal library grows, enabling increasingly complex cross-source analysis without further external exposure. This method represents a significant shift from 'search' to 'structured analytics,' providing a scientifically repeatable framework for intelligence gathering.

More from this Playlist

DEF CON 33 Recon Village - Mapping the Shadow War From Estonia to Ukraine - Evgueni Erchov

DEF CON 33 Recon Village - How to Become One of Them: Deep Cover Ops - Sean Jones, Kaloyan Ivanov

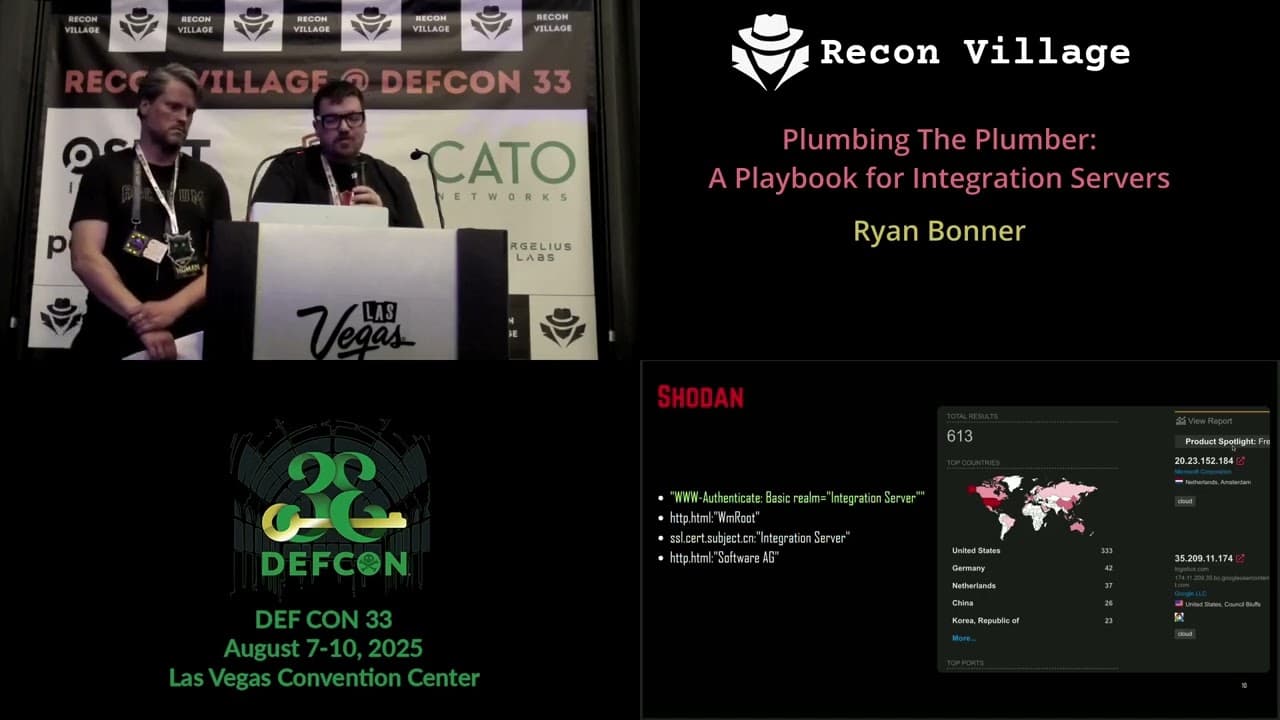

DEF CON 33 Recon Village - A Playbook for Integration Servers - Ryan Bonner, Guðmundur Karlsson

DEF CON 33 Recon Village - Autonomous Video Hunter AI Agents for Real Time OSINT - Kevin Dela Rosa