DEF CON 33 Recon Village - Robin The Archaeologist of the Dark Web - Apurv Singh Gautam

Description

Apurv Singh Gautam introduces Robin, an AI-powered Dark Web OSINT tool designed to automate the discovery, filtering, and analysis of onion services. The tool streamlines complex investigations by using Large Language Models (LLMs) to refine search queries, prioritize relevant links, and generate structured intelligence reports from scraped data.

REVOLUTIONIZING DARK WEB OSINT WITH ROBIN: THE AI-POWERED ARCHAEOLOGIST

Introduction

The Dark Web remains a primary hub for cybercriminal activity, hosting everything from ransomware leak sites to illicit marketplaces. For threat intelligence analysts, navigating this ecosystem is often a manual, tedious process. At DEF CON 33 Recon Village, Apurv Singh Gautam, a Senior Threat Research Analyst at Cyble, unveiled Robin, an AI-powered Dark Web OSINT tool designed to automate the heavy lifting of 'Dark Web archaeology.' By integrating Large Language Models (LLMs) into the reconnaissance pipeline, Robin turns fragmented data collection into a single, largely automated intelligence-gathering workflow. This post explores the architecture, methodology, and practical applications of Robin in modern security research.

Background & Context

Dark Web OSINT is notoriously difficult because .onion services are volatile. Links go down frequently, search engines are often unreliable, and the signal-to-noise ratio is very low. Traditionally, a researcher would manually collect links from various aggregators, check whether they are still up, scrape the content, and then spend hours synthesizing that information into a coherent report. Apurv noted that many tools already exist for these individual tasks, such as specialized crawlers and link scrapers, but they are often written in different languages (Python, Go, etc.) and do not communicate with each other. This fragmentation imposes a 'tool-switching tax' that slows down critical investigations. Robin was built to close these gaps with a unified framework that uses AI to handle natural-language queries and data synthesis.

Technical Deep Dive

The Architecture of Automated Reconnaissance

Robin operates as a multi-stage pipeline that covers the entire lifecycle of a Dark Web investigation. The process starts at either the CLI or the Web UI, where the user enters a search query. Instead of sending raw keywords directly to search engines, Robin first passes the query to an LLM for refinement. The goal is for a broad search such as 'ransomware' to be turned into more targeted queries for leak sites, threat actor handles, or specific criminal forums.
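A minimal sketch of what a query-refinement step could look like is shown below. It is not Robin's code: the prompt wording, the `refine_query` helper, and the `llm` callable (any function that takes a prompt string and returns the model's text) are hypothetical, and the sketch assumes the model is asked for a single refined query line.

```python
"""Hypothetical sketch of an LLM query-refinement step (not Robin's actual code)."""
from typing import Callable

# Hypothetical prompt wording; Robin's real prompt is not reproduced in the talk summary.
REFINE_PROMPT = (
    "You are assisting a dark web threat intelligence investigation.\n"
    "Rewrite the user's query into a short keyword query suited to onion search engines.\n"
    "Output ONLY the refined query on a single line, with no explanation.\n\n"
    "User query: {query}"
)


def refine_query(query: str, llm: Callable[[str], str]) -> str:
    """Ask the model (any prompt -> text callable) for a refined query and sanitize the reply."""
    reply = llm(REFINE_PROMPT.format(query=query))
    # Keep only the first non-empty line and strip quotes the model may wrap around it.
    for line in reply.splitlines():
        cleaned = line.strip().strip("\"'")
        if cleaned:
            return cleaned
    # Fall back to the user's original query if the model returned nothing usable.
    return query
```

Passing the model in as a plain callable keeps the step provider-agnostic and easy to test with a stub: `refine_query("ransomware", lambda p: '"ransomware leak site"')` returns `ransomware leak site`.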

Once refined, the query is sent to 15 separate onion search engines simultaneously. This wide net often returns more than 200 results. To avoid overwhelming the scraper (and the analyst), Robin uses a second LLM stage to filter these results down to the top 20 links most relevant to the original investigative intent. Apurv highlighted an 'index-based' prompting technique that keeps the LLM from hallucinating links: the model must select entries from a provided list by their indices rather than write out URLs, which could be new or fake.
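The fan-out step can be sketched with Python's standard `concurrent.futures` module. This is an illustrative assumption rather than Robin's implementation: each engine is modeled as a hypothetical callable that returns `(title, url)` pairs (in practice it would fetch a results page over Tor and parse it), failing engines are skipped, and duplicate URLs returned by several engines are merged before the LLM filtering stage.

```python
"""Hypothetical sketch: query several search engines in parallel and merge their results."""
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Set, Tuple

Result = Tuple[str, str]  # (title, url)
Engine = Callable[[str], List[Result]]  # hypothetical: query -> results from one engine


def search_all(query: str, engines: Dict[str, Engine], max_workers: int = 15,
               timeout: float = 60.0) -> List[Result]:
    """Run every engine concurrently and return results de-duplicated by URL."""
    merged: List[Result] = []
    seen: Set[str] = set()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(engine, query) for engine in engines.values()]
        for future in futures:
            try:
                results = future.result(timeout=timeout)
            except Exception:
                # Onion engines are often down or slow: skip failures instead of aborting.
                continue
            for title, url in results:
                key = url.rstrip("/")  # treat "http://x.onion/" and "http://x.onion" as one link
                if key not in seen:
                    seen.add(key)
                    merged.append((title, url))
    return merged
```

Skipping a failed engine instead of raising matters here because, as noted earlier, onion search engines are frequently offline; one dead engine should not sink the whole search.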

Engineering Reliable AI Prompts

The core strength of Robin lies in its prompt engineering. Apurv shared that getting an LLM to reliably produce output that a script can parse requires highly specific instructions. For example, for the filtering stage to work, the prompt must demand 'only the indices' of the relevant links.
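The index-based technique can be sketched as a prompt builder plus a strict parser. The function names, the prompt text, and the `index | title | url` table layout are assumptions for illustration (the talk summary says only that tabular formats were used); the key property is that the parser maps indices back onto the original list, so any out-of-range number the model produces is simply dropped and no URL can be fabricated.

```python
"""Hypothetical sketch of index-based link filtering: the model picks indices, never URLs."""
import re
from typing import Callable, List, Set, Tuple

Result = Tuple[str, str]  # (title, url)


def build_filter_prompt(query: str, results: List[Result], top_n: int = 20) -> str:
    """Present candidates as a numbered table so the model only has to return indices."""
    rows = "\n".join(f"{i} | {title} | {url}" for i, (title, url) in enumerate(results, start=1))
    return (
        f"Investigation query: {query}\n"
        f"Select up to {top_n} results most relevant to the query.\n"
        "Output ONLY the indices as a comma-separated list, nothing else.\n\n"
        f"index | title | url\n{rows}"
    )


def filter_results(query: str, results: List[Result], llm: Callable[[str], str],
                   top_n: int = 20) -> List[Result]:
    """Ask the model for indices and map them back onto the provided list."""
    reply = llm(build_filter_prompt(query, results, top_n))
    picked: List[Result] = []
    seen: Set[int] = set()
    for token in re.findall(r"\d+", reply):
        idx = int(token)
        # Ignore out-of-range or repeated indices: every link must come from the input list.
        if 1 <= idx <= len(results) and idx not in seen:
            seen.add(idx)
            picked.append(results[idx - 1])
        if len(picked) == top_n:
            break
    return picked
```

With three candidates, a reply such as `2, 2, 7, 1` yields only the second and first entries: the duplicate is ignored and 7 is out of range.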

The final stage is the 'Investigation Report' generator. After the selected links are scraped, this prompt, the most complex of the three, instructs the AI to parse the raw scraped HTML and extract specific artifacts. Robin looks for:

- Threat Actor Aliases: Identifying known or new handles.

- Cryptocurrency Addresses: Automatically extracting Bitcoin or Monero wallets for financial tracking.

- PII and Metadata: Finding emails, names, or other identifying information.

- Contextual Insights: Summarizing the site's purpose and recent activity.
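The extraction itself is done by the LLM, but a deterministic pass over the same scraped text can be a useful cross-check on what the report claims. The sketch below swaps in plain regular expressions for that purpose; it is not part of Robin as described in the talk. The patterns are approximate: they match the shape of Bitcoin (legacy and bech32) addresses, 95-character Monero addresses, and email addresses, but they do not validate checksums, so matches still need verification.

```python
"""Hypothetical deterministic cross-check for artifacts an LLM report claims to have found."""
import re
from typing import Dict, List

# Approximate patterns: they match the *shape* of an artifact, not its checksum.
PATTERNS = {
    # Base58 legacy/P2SH addresses start with 1 or 3 (base58 omits 0, O, I, l).
    "btc_legacy": re.compile(r"\b[13][a-km-zA-HJ-NP-Z1-9]{25,34}\b"),
    # Bech32 addresses start with bc1 and use a 32-character set without 1, b, i, o.
    "btc_bech32": re.compile(r"\bbc1[ac-hj-np-z02-9]{11,71}\b"),
    # Standard Monero addresses (4...) and subaddresses (8...) are 95 base58 characters.
    "monero": re.compile(r"\b[48][1-9A-HJ-NP-Za-km-z]{94}\b"),
    "email": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
}


def extract_artifacts(text: str) -> Dict[str, List[str]]:
    """Return sorted, de-duplicated matches for each artifact type."""
    return {name: sorted(set(pattern.findall(text))) for name, pattern in PATTERNS.items()}
```

Any address that appears in the LLM's report but not in this output (or vice versa) is a good candidate for manual review.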

Tools and Integration

Robin is designed for flexibility. It supports the 'Big Three' commercial LLM providers: OpenAI, Anthropic's Claude, and Google's Gemini. Crucially for privacy-conscious researchers, it also supports local models via Ollama, so the analysis can stay entirely on the researcher's hardware. The backend manages the Tor connection so that all traffic is routed through the Tor network, helping protect the researcher's anonymity throughout the process.
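For the local option, Ollama exposes an HTTP API on the analyst's own machine. A minimal standard-library sketch of sending a prompt to it follows; it assumes Ollama is running on its default port 11434 and that a model has already been pulled (the model name `llama3.1` used in the check below is only a placeholder). How Robin itself wires up its providers is not covered in the talk summary.

```python
"""Hypothetical sketch: send a prompt to a local Ollama server so data stays on the analyst's machine."""
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_ollama_request(model: str, prompt: str, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )


def ask_local_model(model: str, prompt: str, timeout: float = 300.0) -> str:
    """Send the request and return the generated text (requires a running Ollama server)."""
    with urllib.request.urlopen(build_ollama_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]
```

Setting `"stream": False` makes Ollama return a single JSON object with the full answer in its `response` field instead of a stream of partial chunks, which is simpler to feed into the next pipeline stage.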

Mitigation & Defense

From a defensive standpoint, tools like Robin help Blue Teams and Threat Intelligence units spot emerging threats earlier. By automating the monitoring of ransomware leak sites and credential marketplaces, organizations can identify data breaches faster. The 'next steps' section of Robin's reports provides actionable leads, such as specific threat actor names to hunt for in internal logs or new cryptocurrency addresses to monitor with blockchain analysis tools. For defenders, the key is to use these automated insights to prioritize patching and incident response based on real-world evidence found on the Dark Web.
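As a simple illustration of the log-hunting step, the sketch below checks internal log lines for indicators taken from a report, such as aliases or wallet addresses. The `hunt` function and the sample data are hypothetical, and case-insensitive substring matching is a deliberately naive choice: it is easy to audit, but it can produce false positives for short aliases, which may need stricter matching.

```python
"""Hypothetical sketch: hunt for report indicators (aliases, wallet addresses) in log lines."""
from typing import Dict, Iterable, List


def hunt(log_lines: Iterable[str], indicators: Iterable[str]) -> Dict[str, List[int]]:
    """Map each indicator to the 1-based line numbers where it appears (case-insensitive)."""
    # Drop blank indicators; keep each indicator's original spelling as the result key.
    terms = {i.strip() for i in indicators if i.strip()}
    hits: Dict[str, List[int]] = {}
    for lineno, line in enumerate(log_lines, start=1):
        lowered = line.lower()
        for term in terms:
            # Naive substring match: simple to reason about, noisy for very short aliases.
            if term.lower() in lowered:
                hits.setdefault(term, []).append(lineno)
    return hits
```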

Conclusion & Key Takeaways

Robin is a significant step forward in Dark Web reconnaissance. By offloading the repetitive work of link filtering and data synthesis to AI, it frees analysts to focus on higher-level analysis and attribution, while they remain responsible for verifying the findings. The primary takeaways from Apurv's presentation are the value of consolidated tooling and the power of well-engineered LLM prompts to reduce investigative overhead. As Dark Web threats continue to evolve, automated tools that scale with the volume of data will become increasingly important. Robin is available on GitHub for anyone who wants to start their own automated Dark Web investigations. Remember: always conduct research ethically and within the legal framework of your jurisdiction.

AI Summary

In this presentation at DEF CON 33's Recon Village, Apurv Singh Gautam, a senior threat research analyst at Cyble and contributor to SANS cybercrime courses, presents his tool 'Robin'. The talk focuses on the challenges of performing Open Source Intelligence (OSINT) on the Dark Web, specifically within the Tor (.onion) ecosystem. Apurv explains that traditional Dark Web research is often fragmented, requiring researchers to jump between multiple disjointed tools for link collection, status checking, scraping, and final report writing. Robin was developed to consolidate these steps into a single, automated workflow enhanced by Artificial Intelligence.

The architecture of Robin is built around a multi-stage pipeline. It begins with the user entering a query, which is then refined by an LLM to optimize search performance. This refined query is sent to 15 different Onion search engines, often returning hundreds of results. To manage this volume, a second LLM prompt filters the results down to the top 20 most relevant links using a specific 'index method' to prevent hallucination. The tool then scrapes these selected sites and passes the raw data to a final LLM stage that generates a comprehensive investigation report. This report specifically categorizes artifacts such as PII, cryptocurrency addresses, and threat actor aliases, while also providing 'next step' recommendations for further investigation.

A significant portion of the talk is dedicated to the engineering of LLM prompts. Apurv shares insights on the trial and error required to make the tool reliable, such as instructing the model to output only specific indices or keywords to maintain script compatibility. He highlights the difficulty of processing large sets of URLs and titles, which led to the implementation of tabular formats for the LLM to process internally.

The tool supports both a Command Line Interface (CLI) and a Web User Interface (UI), and it is compatible with major LLM providers including OpenAI, Claude, Gemini, and local models via Ollama. The presentation concludes with a demonstration of the tool's output, showing how it transforms raw scraped data into a structured Markdown report.

Future updates for Robin include maintaining session continuity between queries, adding safe search options to the UI, and expanding support for newer LLM models. Apurv emphasizes that while Robin significantly reduces the time required for Dark Web research, human analysts remain critical for verifying the findings and following up on the generated leads.

More from this Playlist

DEF CON 33 Recon Village - Mapping the Shadow War From Estonia to Ukraine - Evgueni Erchov

DEF CON 33 Recon Village - How to Become One of Them: Deep Cover Ops - Sean Jones, Kaloyan Ivanov

DEF CON 33 Recon Village - Building Local Knowledge Graphs for OSINT - Donald Pellegrino

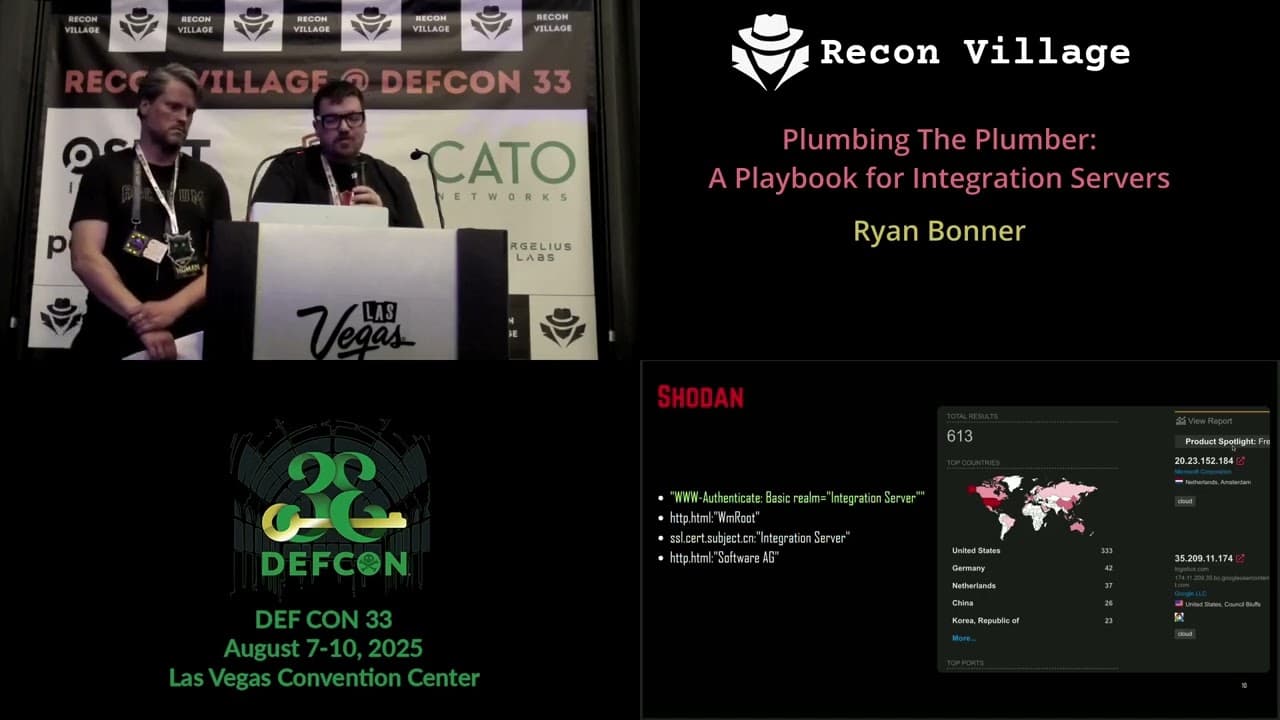

DEF CON 33 Recon Village - A Playbook for Integration Servers - Ryan Bonner, Guðmundur Karlsson