DEF CON 33 Recon Village - enumeraite: AI Assisted Web Attack Surface Enumeration - Özgün Kültekin

Description

Özgün Kültekin introduces 'enumeraite,' an AI-assisted tool designed to overcome the limitations of static wordlists in web reconnaissance. The presentation demonstrates how Large Language Models (LLMs) can predict hidden subdomains and API endpoints by understanding complex naming conventions and semantic patterns.

Beyond the Wordlist: AI-Assisted Web Attack Surface Enumeration

Introduction

In the world of professional penetration testing and bug hunting, reconnaissance is often the factor that separates a successful engagement from a fruitless one. However, as modern web architectures move toward massive microservice ecosystems and complex cloud deployments, the traditional 'brute force' approach to discovery is reaching its limits. During his DEF CON 33 talk, security researcher Özgün Kültekin (Aussie) unveiled a paradigm shift: moving away from static wordlists toward AI-driven enumeration with his tool, enumeraite.

This isn't about the 'AI hype' of simply asking a chatbot to find bugs. It is a technical deep dive into how Large Language Models (LLMs) can be fine-tuned to understand the 'language' of subdomains and API endpoints, allowing researchers to find the hidden assets that traditional tools consistently overlook. Whether you are a red teamer or a bug bounty hunter, understanding this shift is crucial for staying relevant in an increasingly automated landscape.

The Problem: The Static Wordlist Wall

For years, tools like ffuf, gobuster, and nmap have been the workhorses of recon. For content and subdomain discovery, they rely on wordlists like SecLists or the Assetnote wordlists. While these are invaluable resources, they suffer from a fundamental flaw: they are generic. A wordlist created in 2021 won't know that a specific target uses MDN to represent a data center in Maiden, North Carolina, or that its developers use _CRT as an abbreviation for 'Create' in their private APIs.

The result is that 'Shadow IT' assets exist but are never tested, because they don't fit the common patterns. Furthermore, traditional brute-forcing is noisy and often triggers rate-limiting or WAF blocks. To find the truly high-impact vulnerabilities, we need to fuzz smarter, not harder. We need tools that can look at a handful of discovered endpoints and 'dream up' the likely siblings based on the target's internal logic.

Technical Deep Dive

Understanding Semantic Proximity

At the heart of AI-assisted enumeration is the concept of distributional semantics. LLMs turn words (or tokens) into high-dimensional vectors. In this vector space, words that appear in similar contexts are placed closer together. Kültekin demonstrates that an LLM treats /api/v1/user_CRT and /api/v1/adm_CRT as semantically related even though the entity tokens user and adm share no characters. Both follow the same pattern: an [Entity] followed by a [Shortened Action]. A model trained on millions of real-world subdomains and paths learns the 'grammar' of infrastructure.
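To make the idea of 'similar contexts' concrete, here is a minimal sketch that builds count-based context vectors from a handful of paths and compares them with cosine similarity. It is a toy stand-in, not the learned embeddings of an LLM and not enumeraite's code; the paths in PATHS and all function names are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict
from math import sqrt

# Hypothetical observed paths; a real model would see vastly more data.
PATHS = [
    "/api/v1/user_CRT",
    "/api/v1/user_UPD",
    "/api/v1/adm_CRT",
    "/api/v1/adm_UPD",
    "/static/css/main",
    "/static/css/theme",
]

def tokenize(path):
    """Split a path on '/' and '_' into lowercase tokens."""
    return [t.lower() for t in path.replace("_", "/").split("/") if t]

def context_vectors(paths):
    """Map each token to counts of the other tokens seen in the same path."""
    vectors = defaultdict(Counter)
    for path in paths:
        tokens = tokenize(path)
        for i, token in enumerate(tokens):
            for j, other in enumerate(tokens):
                if i != j:
                    vectors[token][other] += 1
    return vectors

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[key] for key, count in a.items() if key in b)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

vectors = context_vectors(PATHS)
sim_user_adm = cosine(vectors["user"], vectors["adm"])
sim_user_css = cosine(vectors["user"], vectors["css"])
```

In this toy corpus, user and adm appear next to the same neighbours (api, v1, crt, upd), so their vectors point in the same direction, while css shares no context with either. Learned embeddings capture the same intuition with dense vectors rather than raw counts.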

The Agentic Workflow

enumeraite utilizes an 'agentic' structure rather than a single prompt. This process involves several specialized steps:

- Pattern Inference: An agent analyzes a known-good subdomain (e.g., activate-iphone-us1-cx02.apple.com) and deconstructs it into slots: [Action]-[Product]-[Region]-[ClusterID].

- Slot Filling: A second agent, fine-tuned on corporate naming conventions, suggests alternatives for each slot. For 'Product,' it might suggest ipad, mac, or watch. For 'Region,' it predicts other data center codes.

- Combinatorial Generation: The tool generates the cross-product of these realistic variations, creating a highly targeted list of candidates for validation.
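The three stages above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not enumeraite's implementation: the slot names, the parse and generate helpers, and the region values us2 and eu1 are all hypothetical, and the alternatives are hard-coded where the talk's workflow would have a model propose them. The generated hostnames are candidates only and are not known to exist.

```python
from itertools import product

# Slot names for the seed's pattern; hard-coded here as an assumption,
# whereas the described workflow has an agent infer them.
SLOTS = ["action", "product", "region", "cluster"]

def parse(hostname, slots=SLOTS):
    """Split the first DNS label on '-' and map each piece to a slot name."""
    label, _, domain = hostname.partition(".")
    parts = label.split("-")
    if len(parts) != len(slots):
        raise ValueError(f"{hostname!r} does not match the {len(slots)}-slot pattern")
    return dict(zip(slots, parts)), domain

def generate(seed, alternatives, slots=SLOTS):
    """Return every slot combination except the seed itself."""
    filled, domain = parse(seed, slots)
    options = []
    for slot in slots:
        values = [filled[slot]]
        values += [v for v in alternatives.get(slot, []) if v not in values]
        options.append(values)
    hosts = ["-".join(combo) + "." + domain for combo in product(*options)]
    return [h for h in hosts if h != seed]

SEED = "activate-iphone-us1-cx02.apple.com"
# Hypothetical slot fills standing in for model suggestions.
ALTERNATIVES = {
    "product": ["ipad", "mac", "watch"],
    "region": ["us2", "eu1"],
}
candidates = generate(SEED, ALTERNATIVES)
```

With four product values and three region values, the cross-product yields twelve hostnames, eleven of which are new. The list stays small because every slot value is plausible for the target, which is the point of the approach compared with a generic wordlist.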

Step-by-Step Implementation Strategy

To implement this approach in your own workflow, follow these conceptual steps:

- Initial Recon: Use standard tools (subfinder, waybackurls) to gather a baseline of 10-20 legitimate subdomains or paths.

- Contextual Seeding: Feed these results into a specialized LLM (like the Qwen 4B model provided by enumeraite).

- Pattern Extraction: Ask the model to identify the naming convention (e.g., 'Target uses 3-letter airport codes for environment regions').

- Targeted Fuzzing: Use the AI-generated list as a custom wordlist for tools like httpx or dnsx to verify which candidates actually resolve.
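For the final validation step, dedicated tools are the practical choice, but the underlying check is simple enough to sketch with the Python standard library. The helper names resolves and filter_live are hypothetical; the resolver is passed in as a parameter so the filtering logic can be exercised without touching the network.

```python
import socket

def resolves(hostname):
    """Return True if the system resolver returns at least one address."""
    try:
        socket.getaddrinfo(hostname, None)
        return True
    except socket.gaierror:
        return False

def filter_live(candidates, resolver=resolves):
    """Normalize, de-duplicate, and keep only candidates the resolver accepts."""
    seen = set()
    live = []
    for host in candidates:
        host = host.strip().lower()
        if not host or host in seen:
            continue
        seen.add(host)
        if resolver(host):
            live.append(host)
    return live

# Example call against real DNS (only for targets you are authorized to test):
# live_hosts = filter_live(open("candidates.txt"))
```

One caveat worth keeping in mind: if the target zone uses a wildcard DNS record, every candidate will resolve, so a real pipeline would first query a random label and discard results that match its answer.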

Mitigation & Defense

From a blue team perspective, AI-driven enumeration is a nightmare because it looks much more like legitimate traffic than a standard brute-force attack. To defend against this:

- Normalize Naming Conventions: Avoid using predictable abbreviations or internal regional codes in public-facing DNS.

- Zero Trust Architecture: Ensure that finding a 'hidden' subdomain doesn't equate to access. Implement strong authentication on every endpoint, regardless of how obscure the URL is.

- Behavioral Analysis: Monitor for 'low and slow' discovery patterns that skip through valid-looking but non-existent endpoints.
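As a rough illustration of the behavioral-analysis idea, the sketch below flags clients that touch many distinct paths where most of them do not exist. It counts distinct paths rather than request rate, so a slow scan is not missed simply because it is slow. The event format, the function name flag_enumerators, and both thresholds are assumptions that would need tuning against real traffic.

```python
from collections import defaultdict

def flag_enumerators(events, min_distinct=5, min_miss_ratio=0.6):
    """Flag clients whose distinct requested paths are mostly 404s.

    events: iterable of (client_id, path, status_code) tuples.
    """
    paths = defaultdict(set)   # every distinct path each client requested
    misses = defaultdict(set)  # distinct paths that returned 404
    for client, path, status in events:
        paths[client].add(path)
        if status == 404:
            misses[client].add(path)

    flagged = []
    for client, seen in paths.items():
        miss_ratio = len(misses[client]) / len(seen)
        if len(seen) >= min_distinct and miss_ratio >= min_miss_ratio:
            flagged.append(client)
    return sorted(flagged)
```

A client that requests six plausible-looking endpoints and gets five 404s would be flagged under these defaults, while a client with two mistyped URLs would not.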

Conclusion & Key Takeaways

The 'enumeraite' research makes the case that AI's greatest strength in cybersecurity isn't replacing the hacker, but enhancing the hacker's intuition at scale. By moving from static wordlists to dynamic, context-aware generators, we can uncover parts of the attack surface that generic lists miss.

Key Takeaways:

- Static wordlists are no longer sufficient for modern, complex targets.

- LLMs are uniquely suited for recon because they excel at next-token prediction and semantic understanding.

- Agentic workflows allow for the automation of complex manual reverse-engineering of naming patterns.

- Practicing safe and ethical disclosure is paramount when using these advanced techniques to find hidden assets.

You can find the models and the enumeraite tool on GitHub to begin incorporating AI-assisted recon into your own security research.

AI Summary

In this DEF CON 33 Recon Village presentation, Özgün Kültekin (Aussie), an offensive security engineer at Trendyol Group, discusses the evolving challenges of web attack surface enumeration. He highlights the problem of 'Shadow IT' and the sheer scale of modern microservice architectures, using Netflix's complex infrastructure as an example. Traditional reconnaissance methods often rely on static wordlists like SecLists, which fail to capture target-specific naming conventions, regional abbreviations, or internal-only subdomains that aren't indexed by public search engines or certificates. Kültekin argues that while manual reverse-engineering of naming logic is effective, it is not scalable.

The core of the presentation focuses on the application of Artificial Intelligence, specifically Large Language Models (LLMs), to escape this 'enumeration hell.' Kültekin explains the technical mechanics behind this approach, focusing on next-token prediction and distributional semantics. By converting subdomains and URL paths into vectors, LLMs can identify semantic similarities. For example, a model understands that 'ADM' is more closely related to 'USER' than to 'CSS' because of their functional context. This allows tools to generate high-probability candidates for fuzzing that a static wordlist would miss.

Kültekin compares different AI architectures, including LSTMs (Long Short-Term Memory networks) and Transformers. While LSTMs are useful for sequential patterns, they lack the 'intelligence' to understand broader context. Modern Transformers, like the Qwen 1.5 4B model used in his tool 'enumeraite,' can process longer dependencies and understand company-specific patterns. He demonstrates this with a real-world Apple subdomain example where a model correctly identified 'MDN' as an abbreviation for 'Maiden, North Carolina' by analyzing surrounding context (RNO for Reno, NWK for Newark).

The speaker introduces an 'agentic' structure for his tool. This workflow involves breaking a known subdomain into symbolic slots (e.g., [Action]-[Product]-[Region]). One LLM identifies the pattern, and another generates realistic variations based on the target's business logic (e.g., swapping 'iPhone' for 'iPad' or 'Mac' in a diagnostic URL). He shares a personal success story where AI helped him discover a hidden API endpoint (`user_RMV`) after finding a legitimate one (`user_CRT`), leading to a successful bug bounty for unauthorized account deletion. The talk concludes with the announcement of the open-sourcing of 'enumeraite' and its underlying fine-tuned models.

More from this Playlist

DEF CON 33 Recon Village - Mapping the Shadow War From Estonia to Ukraine - Evgueni Erchov

DEF CON 33 Recon Village - How to Become One of Them: Deep Cover Ops - Sean Jones, Kaloyan Ivanov

DEF CON 33 Recon Village - Building Local Knowledge Graphs for OSINT - Donald Pellegrino

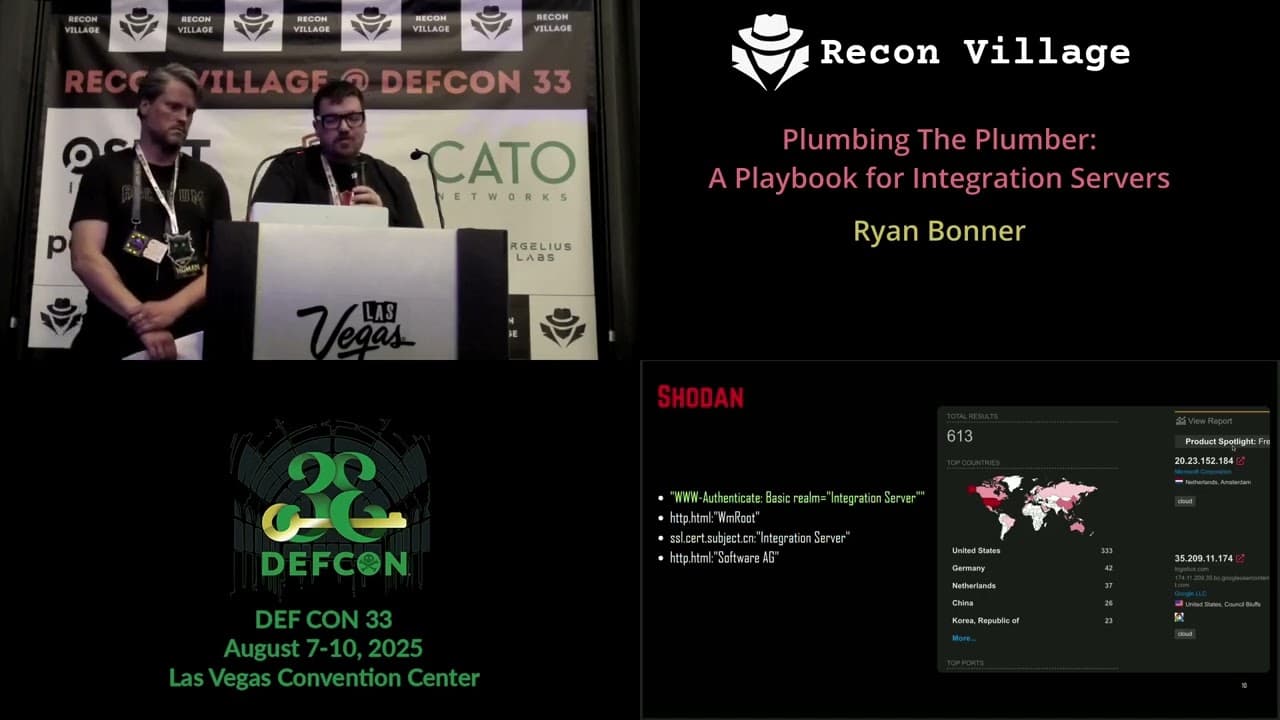

DEF CON 33 Recon Village - A Playbook for Integration Servers - Ryan Bonner, Guðmundur Karlsson